People looking at the AI engineer market right now usually fall into one of two situations.

They’re a software engineer wondering whether “AI engineer” is a real job or just a trendy relabeling of backend, data, and ML work. Or they’re a founder who knows AI matters, is under pressure to ship something useful, and can’t tell whether they need a data scientist, an ML engineer, or someone who can get an AI feature into production without setting money on fire.

The confusion makes sense. The label is broad, and the hype doesn’t help. What does help is looking at what companies are hiring for and what teams need. AI skills appeared in 1.7% of all US job openings in 2024, up from 0.5% in 2010, and 42% of companies deploy AI to gain a competitive advantage, according to Master of Code’s roundup of AI hiring and adoption data. That doesn’t mean every company needs a frontier researcher. It means more companies need builders who can turn AI capability into a working product.

At startups, that distinction matters. A notebook demo is useful for a meeting. A production system that handles messy inputs, failure cases, cost constraints, and user expectations is useful for a business.

That’s where the modern AI engineer sits. The role usually combines software engineering discipline, practical ML judgment, and enough platform sense to deploy, monitor, and improve systems under real operating conditions. If you’re trying to break into the field, that’s good news. You don’t need to look like a research scientist. If you’re hiring, it means the strongest candidates often won’t be the ones with the most theoretical polish. They’ll be the ones who can ship.

The fastest way to get clear on the AI engineer role is to separate it from adjacent titles that often get lumped together.

In startups, titles drift. One company’s “AI engineer” is another company’s “ML engineer” or “applied engineer.” Still, the day-to-day work usually reveals the actual role. Some people spend most of their time exploring data and validating whether an approach works. Others spend most of their time making models reliable inside a product. Those are not the same job, even if they overlap.

Here’s the practical distinction hiring teams usually care about:

| Role | Primary Focus | Core Tasks | Key Deliverable |
|---|---|---|---|
| AI Engineer | Shipping AI-powered product features | Integrate models into apps, design inference flows, handle edge cases, monitor behavior, work with product and engineering | Production AI system users can rely on |
| Data Scientist | Learning from data and testing hypotheses | Analyze datasets, run experiments, prototype models, interpret patterns, communicate findings | Insight, prototype, or recommendation |
| ML Engineer | Scaling model pipelines and ML infrastructure | Train and optimize models, build training pipelines, manage deployment workflows, improve performance and reliability | Production-ready ML pipeline or service |

For candidates, this matters because many portfolios target the wrong audience. A startup hiring manager rarely gets excited by a familiar benchmark project if it says nothing about deployment, observability, API design, or failure handling.

For founders, this matters because vague hiring briefs attract vague candidates. If what you really need is someone who can connect a model to your product stack, manage data flow, and own reliability, writing a spec for a “gen AI wizard” won’t get you there.

The practical question isn’t “Can this person build a model?” It’s “Can this person build a system around a model that survives contact with users?”

That’s the lens worth using through the rest of this guide.

A real AI engineer job at a startup is less about inventing new algorithms and more about making AI useful under constraints.

That means taking a model, or a workflow built around models, and wiring it into a product so it performs consistently enough for customers and cheaply enough for the business. The work sits in the messy middle between experimentation and software delivery.

On most startup teams, an AI engineer spends time on a mix of tasks like these:

The candidates who do this well usually think like engineers first. They care about reliability, maintainability, and trade-offs. They know that a slightly less elegant approach that can be tested, deployed, and debugged often beats a more complex one that nobody can support.

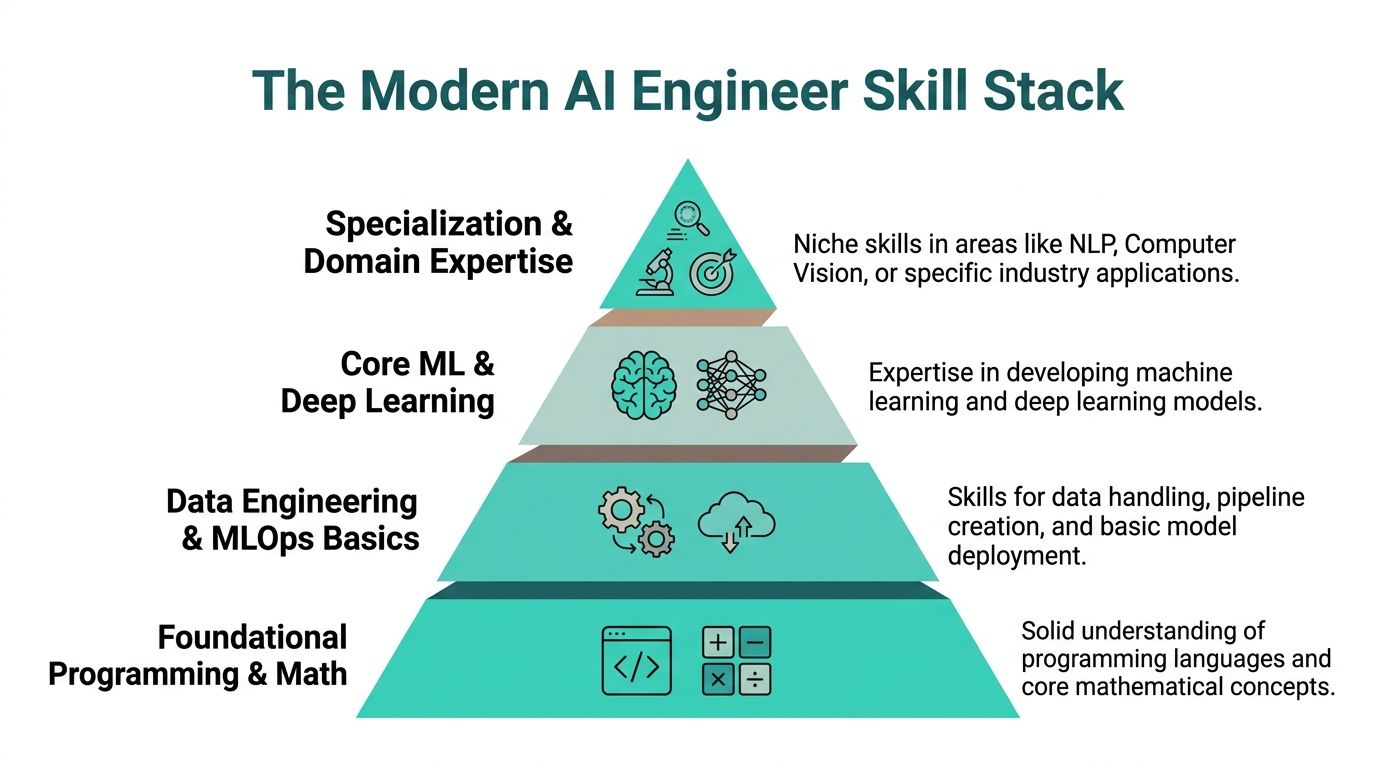

The cleanest way to evaluate an AI engineer is to look at skills in layers.

| Skill Layer | What it includes | Why startups care |
|---|---|---|
| Foundational software skills | Python, APIs, version control, testing, debugging, systems thinking | Without this, AI work stays trapped in notebooks |
| Core AI and ML competencies | Supervised and unsupervised learning, neural networks, deep learning, reinforcement learning, model evaluation | This is how engineers choose and improve approaches instead of guessing |
| Systems and operations skills | Cloud deployment, distributed processing, observability, performance tuning, data pipelines | This is what turns a prototype into a product capability |

The systems and operations layer often gets overlooked by candidates. It shouldn’t. Startups feel the pain there first.

The technical expectations are also more concrete than people assume. AI engineers need to understand core ML techniques, including supervised and unsupervised learning, neural networks, deep learning, and reinforcement learning. They also need fluency in evaluation concepts like accuracy, precision, recall, and F1 score. In production environments, they often use TensorFlow or PyTorch, rely on NumPy and Pandas for analysis, and use Apache Spark for distributed processing on large cloud data lakes. Splunk notes that Spark-based workflows can reduce training time from days to hours in the right setup, which matters when teams need to iterate quickly on large datasets, as described in Splunk’s guide to AI engineering.
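To make those evaluation terms concrete, here is a minimal sketch in plain Python that computes accuracy, precision, recall, and F1 for binary labels. The function name `binary_metrics` is illustrative. In practice you would likely reach for `sklearn.metrics`, which provides equivalent functions. Returning 0.0 when a denominator is zero is a choice made for this sketch.

```python
from typing import Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("need at least one example")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed positives
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    # Precision: of everything flagged positive, how much really was positive?
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: of all real positives, how many did the model catch?
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Imbalanced data: a model that never predicts the positive class.
    print(binary_metrics([0] * 8 + [1, 1], [0] * 10))
    # {'accuracy': 0.8, 'precision': 0.0, 'recall': 0.0, 'f1': 0.0}
```

The usage example shows why accuracy alone misleads on imbalanced data. The model scores 0.8 accuracy while catching none of the cases that matter.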

What fails most often is hiring or presenting for the role as if model quality alone is the job.

A candidate who can explain architectures but can’t explain rollout risk, observability, API boundaries, or data drift usually struggles in startup environments. A founder who hires for “AI brilliance” and ignores infrastructure ownership often ends up with a fragile feature nobody wants to maintain.

Strong AI engineers bridge two worlds. They understand model behavior, but they also understand what breaks at 2 a.m. after launch.

That blend is the job.

The strongest AI engineers don’t build their careers from the top down. They build from the bottom up.

A lot of candidates want to start with the most glamorous layer. They jump to LLM frameworks, agent tooling, or fine-tuning tutorials before they can write clean Python, reason about data flow, or package a service for deployment. That almost always shows up in interviews.

This hierarchy is a better way to think about the role:

The base layer is still software engineering and math. That means writing readable Python, understanding data structures, debugging ugly failures, and knowing core mathematical concepts well enough to reason about model behavior.

If you can’t explain why your pipeline failed, why your model overfit, or why your service times out under load, you’re not ready for most startup AI roles, even if your demo looked impressive.

The next layer is where candidates begin to separate themselves. At this stage, data engineering and MLOps basics become relevant.

A portfolio project gets much stronger when it shows things like:

That’s what startup teams mean when they say they want someone who can “own the full lifecycle.”

Practical rule: If your project ends when the model trains, it doesn’t show enough. If it starts when messy data enters the system and ends when a user gets a reliable result, you’re much closer.

Above that sits model knowledge. Top AI engineers know frameworks like TensorFlow and PyTorch, use NumPy and Pandas for analysis, and can work with large-scale data using Apache Spark on cloud data lakes. In the right environment that stack can cut training time from days to hours, which is one reason startups value engineers who can move comfortably between modeling and systems work.

At the top is specialization. That might be NLP, computer vision, ranking systems, recommendation infrastructure, or industry-specific workflows in fintech, health, or operations software.

A high-impact portfolio project usually tells a simple story. Not “I trained a model.” More like this: a candidate built a support-ticket classifier, exposed it through FastAPI, containerized it, logged prediction failures, documented known edge cases, and added a simple fallback path for low-confidence results. That one project says more than five notebook-only repos.
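The support-ticket project described above can be sketched as a small routing function with a low-confidence fallback. This is a hypothetical example. It assumes a classifier callable that returns a `(label, confidence)` pair. `keyword_classifier`, `route_ticket`, the label names, and the 0.7 threshold are all illustrative stand-ins. The FastAPI endpoint and container setup are left out so the logic runs with the standard library alone.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("ticket_router")

CONFIDENCE_THRESHOLD = 0.7  # assumed cutoff; tune it on held-out data
FALLBACK_LABEL = "needs_human_review"  # hypothetical label for the fallback path


@dataclass
class Prediction:
    label: str
    confidence: float
    used_fallback: bool


def keyword_classifier(text: str) -> tuple[str, float]:
    """Hypothetical stand-in for a trained model."""
    lowered = text.lower()
    if "refund" in lowered or "charge" in lowered:
        return "billing", 0.9
    if "password" in lowered or "login" in lowered:
        return "account_access", 0.85
    return "general", 0.4


def route_ticket(text: str, classify: Callable[[str], tuple[str, float]]) -> Prediction:
    """Classify a ticket, falling back to human review on errors or low confidence."""
    if not text or not text.strip():
        # Documented edge case: empty tickets never reach the model.
        logger.warning("empty ticket; routing to fallback")
        return Prediction(FALLBACK_LABEL, 0.0, True)
    try:
        label, confidence = classify(text)
    except Exception:
        # Log the prediction failure instead of surfacing an error to the user.
        logger.exception("classifier failed; routing to fallback")
        return Prediction(FALLBACK_LABEL, 0.0, True)
    if confidence < CONFIDENCE_THRESHOLD:
        logger.info("low confidence %.2f for %r; routing to fallback", confidence, label)
        return Prediction(FALLBACK_LABEL, confidence, True)
    return Prediction(label, confidence, False)


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(route_ticket("I was charged twice this month", keyword_classifier))
    # Prediction(label='billing', confidence=0.9, used_fallback=False)
    print(route_ticket("Quick question about your roadmap", keyword_classifier))
    # Prediction(label='needs_human_review', confidence=0.4, used_fallback=True)
```

Keeping the fallback logic outside the model is a deliberate choice. You can swap the classifier without touching failure handling, and every fallback leaves a log line to review later.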

What works in startup hiring is evidence of end-to-end thinking. What doesn’t work is stacking buzzwords without showing operational judgment.

A founder opens your portfolio after a referral. They have ten minutes before the next meeting and one question in mind: can this person ship AI features that survive contact with real users?

That standard rules out a lot of otherwise smart candidates. Tutorial repos, Kaggle-style notebooks, and vague claims about “building with LLMs” do not help a hiring team picture you inside a startup. A strong portfolio makes that picture easy. It shows judgment, scope control, product sense, and enough engineering discipline that another person could maintain what you built.

A clean personal site helps. If you need a practical reference for structure, presentation, and what to include, this guide on how to create a digital portfolio is useful because it focuses on clarity rather than visual clutter.

The best portfolio projects read like small production case studies.

They usually include a model, but the model is not the headline. The headline is the system around it and the decisions behind it. Startup teams want evidence that you can turn messy inputs into a working feature, then explain what you would watch, where it could fail, and what trade-offs you made to ship on time.

Good examples include:

This is the gap many applicants miss. They present AI work as research output. Startups hire for product and operational output. That difference matters even more now that many early-stage teams need engineers who can handle inference services, evaluation loops, prompt or model versioning, and basic platform reliability without waiting for a separate MLOps team. It is one reason curated startup talent networks such as Underdog.io often see demand centered on builders who can connect models to production systems, not just experiment in notebooks.
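One compact way to show an evaluation loop with versioning in a portfolio is a regression gate. You score the current version and a candidate version, such as a new prompt or a retrained model, against the same fixed labeled set. The candidate only ships if it does not get worse. The sketch below works under those assumptions. `accuracy_on`, `should_ship`, and the toy eval set are hypothetical. Real eval sets are larger and usually track more than one metric.

```python
from typing import Callable, Sequence

EvalSet = Sequence[tuple[str, str]]  # (input text, expected label) pairs
Predictor = Callable[[str], str]


def accuracy_on(predict: Predictor, eval_set: EvalSet) -> float:
    """Share of eval examples where the predictor returns the expected label."""
    if not eval_set:
        raise ValueError("eval set is empty")
    hits = sum(1 for text, expected in eval_set if predict(text) == expected)
    return hits / len(eval_set)


def should_ship(baseline: Predictor, candidate: Predictor, eval_set: EvalSet,
                max_regression: float = 0.0) -> tuple[bool, float, float]:
    """Approve the candidate only if it scores no worse than baseline minus max_regression."""
    base_score = accuracy_on(baseline, eval_set)
    cand_score = accuracy_on(candidate, eval_set)
    return cand_score >= base_score - max_regression, base_score, cand_score


if __name__ == "__main__":
    # Hypothetical fixed eval set, versioned alongside the prompts or models it tests.
    eval_set = [
        ("Please refund my order", "billing"),
        ("I can't login", "account_access"),
        ("Hi there", "general"),
        ("Why was I charged twice?", "billing"),
    ]

    def v1(text: str) -> str:  # current version
        lowered = text.lower()
        if "refund" in lowered:
            return "billing"
        return "account_access" if "login" in lowered else "general"

    def v2(text: str) -> str:  # candidate version also catches "charged"
        lowered = text.lower()
        if "refund" in lowered or "charge" in lowered:
            return "billing"
        return "account_access" if "login" in lowered else "general"

    print(should_ship(v1, v2, eval_set))  # (True, 0.75, 1.0)
```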

The strongest portfolio does not try to look impressive at all costs. It proves you can make sensible calls with limited time, limited data, and imperfect tooling.

I pay attention to choices like these:

Those details signal maturity. Founders notice them because they live with trade-offs every week. Engineers notice them because they know polished demos can hide fragile systems.

A good project page should answer four questions clearly:

One more point. Do not overstuff your portfolio. Three solid projects with clear write-ups beat eight shallow repos every time.

Good portfolios make it easy for a startup to say yes to an interview. Great ones make the hiring manager start mapping you to actual problems on the team.

A common startup hiring scenario looks like this. Two candidates can both fine-tune a model. One has only research-heavy project work. The other has shipped inference services, handled incidents, and worked with product on what should and should not be automated. The second candidate usually gets the stronger offer, even if the model work looks less flashy on paper.

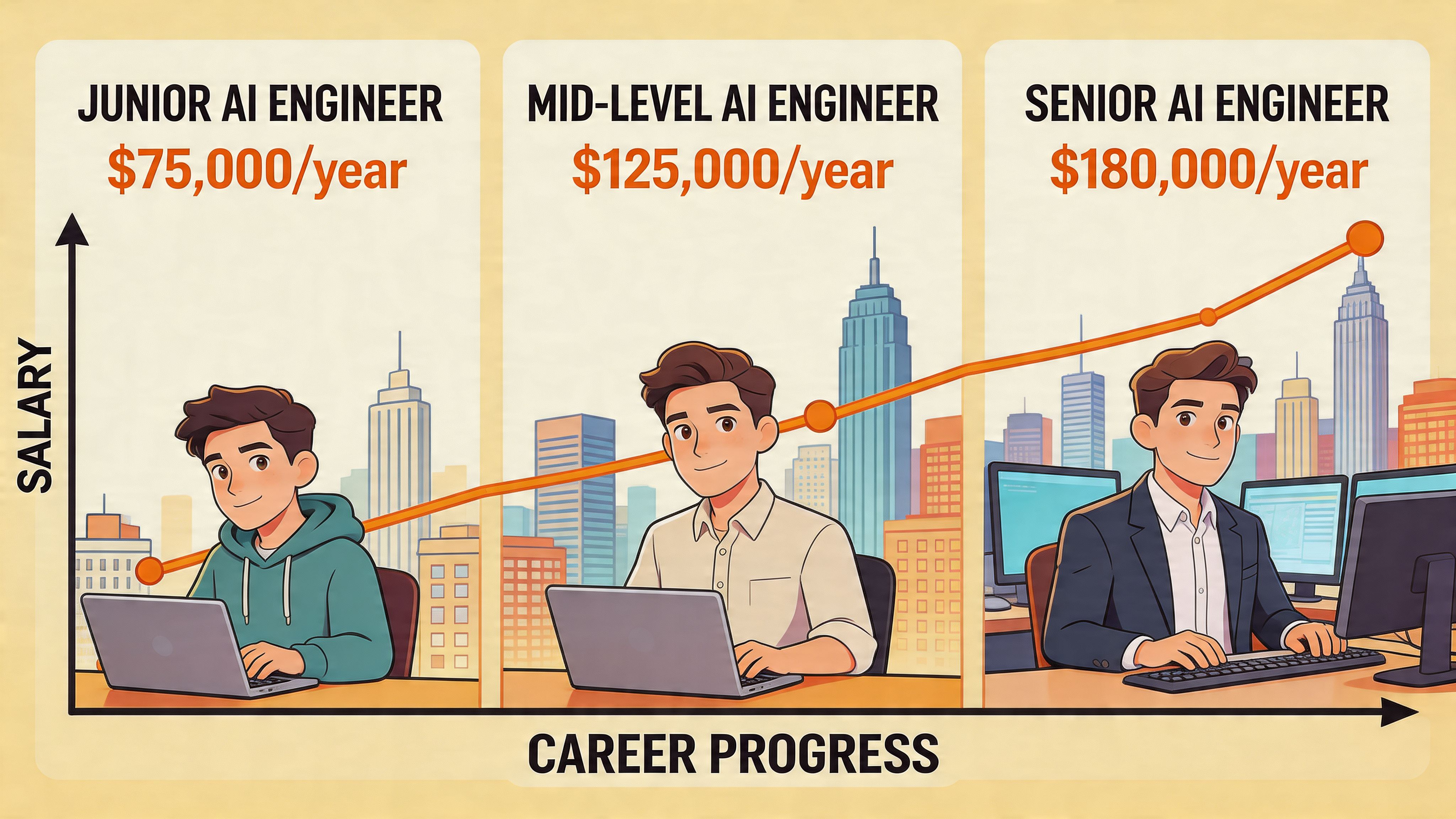

That pattern shapes both career growth and compensation.

AI engineer pay is strong by general software standards, but the title alone does not determine the package. Startups pay for problem ownership. An engineer who can connect model behavior to latency, cost, reliability, and product outcomes will usually command better scope and better upside than someone hired to stay narrowly focused on experimentation.

Early-stage companies rarely level AI engineers by years of experience alone. They level by trust.

| Stage | What the engineer is trusted to do |
|---|---|
| Early IC | Ship product features, adapt existing models, write production code with support |
| Independent IC | Own services end to end, improve reliability, choose evaluation and deployment approaches |
| Senior or lead | Design architecture, set standards, make cost, latency, and quality trade-offs across teams |
| Staff, architect, or player-coach | Set technical direction, connect AI work to business priorities, mentor engineers and influence hiring |

The fastest career path usually runs through platform and operations ownership. In startup hiring, I see stronger demand for engineers who can build the surrounding system than for candidates who only want to tune models. That includes data pipelines, inference infrastructure, evaluation loops, observability, and failure handling. Underdog.io and similar startup hiring networks see this firsthand because founders are hiring for production problems, not abstract AI potential.

Compensation rises when the engineer reduces execution risk for the company.

That can mean owning a retrieval pipeline that cuts bad outputs in production. It can mean cleaning up evaluation so the team stops shipping on gut feel. It can mean building guardrails and monitoring so customer-facing AI features stop failing silently. Founders will stretch on salary and equity for people who make the system dependable.
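Monitoring that catches silent failures does not have to start with a full observability stack. The hedged sketch below tracks the share of recent requests that hit a fallback path over a rolling window and flags when that share crosses a threshold. A rising rate is a cheap early signal that inputs have shifted or a dependency broke. `FallbackRateMonitor`, the window size, and the alert rate are assumptions for illustration. Wiring the alert to a pager or dashboard is out of scope.

```python
from collections import deque


class FallbackRateMonitor:
    """Rolling-window tracker for how often requests end up on the fallback path."""

    def __init__(self, window: int = 200, alert_rate: float = 0.2) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.events: deque[bool] = deque(maxlen=window)  # oldest events drop off
        self.alert_rate = alert_rate

    def record(self, used_fallback: bool) -> None:
        self.events.append(used_fallback)

    def rate(self) -> float:
        return sum(self.events) / len(self.events) if self.events else 0.0

    def should_alert(self) -> bool:
        # Only alert once the window is full, so a handful of early requests can't page anyone.
        return len(self.events) == self.events.maxlen and self.rate() > self.alert_rate


if __name__ == "__main__":
    monitor = FallbackRateMonitor(window=5, alert_rate=0.4)
    for used_fallback in [False, True, True, False, True]:
        monitor.record(used_fallback)
    print(monitor.rate(), monitor.should_alert())  # 0.6 True
```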

Title inflation also muddies salary comparisons. A "senior AI engineer" at a 20-person startup may be doing staff-level work across platform, backend, and product. At a larger company, the same title may cover a narrower lane. Candidates should compare scope before they compare cash.

Equity matters too. So do the refresh policy, expected hours, on-call burden, and whether the role sets you up to own a core product surface. For a grounded way to compare offers, review these startup compensation benchmarks for salary and equity trade-offs.

For engineers, the practical takeaway is straightforward. Career momentum comes from becoming the person a startup can trust to ship, run, and improve AI systems under real constraints.

For founders, the hiring takeaway is just as clear. If you want the strongest AI hires, pay for production ownership, not for title keywords.

Most candidates don’t lose startup AI engineer opportunities because they lack raw ability. They lose them because they present themselves like academic generalists when the company needs a practical builder.

The market signal is clear. There’s an acute shortage of AI engineers with specialized platform and AI operations skills, and 68% of companies report they can’t find qualified candidates, according to this analysis of AI-safe tech roles and hiring gaps. That’s the opening. If you can demonstrate infrastructure, deployment, and operational ownership, you’re solving a real hiring problem.

Your resume should read like an engineer who ships, not a student of AI.

That means emphasizing:

If you’ve worked at startups before, the way you frame that experience matters. This guide on Startup Experience on a Resume is useful because it shows how to present ambiguity, speed, and ownership in a way hiring teams understand.

Founders often make the opposite mistake. They over-index on resume keywords and under-test real judgment.

A better interview loop usually includes:

- **A scoped systems conversation.** Ask how the candidate would build an AI feature into your current stack. Listen for data flow, monitoring, edge cases, and fallback paths.
- **A practical take-home.** Don’t ask for a heroic build. Ask for a small service, an evaluation plan, or a debugging exercise that mirrors your actual work.
- **A failure analysis interview.** Ask about a production issue, bad model behavior, or a deployment problem. Strong candidates get specific fast.
- **A collaboration screen.** Can this person explain trade-offs to product or leadership without hiding behind jargon?

The best startup AI engineer interviews sound less like oral exams and more like design reviews.

General job boards create noise for both sides. Candidates apply into a pile. Founders sort through profiles that may look relevant but aren’t startup-ready.

Curated marketplaces can help when they screen for actual fit, not just titles. One example is Underdog’s AI engineer jobs marketplace, which connects vetted candidates with startups and high-growth teams. That kind of channel is most useful when you want fewer, better-aligned conversations rather than broad applicant volume.

For candidates, the goal is to look like someone who can join a small team and increase shipping speed without creating operational debt. For founders, the goal is to hire someone who lowers execution risk, not just someone who sounds current.

A great AI engineer hire usually doesn’t announce itself through prestige signals alone. The strongest startup hires tend to be people who can take a fuzzy business need, turn it into a concrete spec, and build something resilient enough to survive real usage.

That’s why mature hiring processes focus on work quality before pedigree.

Expert AI engineers define precise input and output expectations before they build. That practice matters more than most founders realize. According to Galileo’s overview of specification-first AI development, clear specs can prevent 40% to 60% of downstream bugs, and spec-driven projects can reduce development time by 30% to 50%.
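A spec can be as small as typed input and output contracts that reject bad data at the boundary, before any model code exists. The sketch below assumes a ticket-classification feature. The label set, the 5,000-character limit, and the class names `TicketInput` and `TicketOutput` are hypothetical choices a team would agree on up front.

```python
from dataclasses import dataclass

# Hypothetical contract values, agreed on before any model work starts.
ALLOWED_LABELS = frozenset({"billing", "account_access", "general", "needs_human_review"})
MAX_INPUT_CHARS = 5000


@dataclass(frozen=True)
class TicketInput:
    text: str

    def __post_init__(self) -> None:
        # Reject inputs the spec says the system will never handle.
        if not self.text.strip():
            raise ValueError("ticket text must be non-empty")
        if len(self.text) > MAX_INPUT_CHARS:
            raise ValueError(f"ticket text exceeds {MAX_INPUT_CHARS} characters")


@dataclass(frozen=True)
class TicketOutput:
    label: str
    confidence: float

    def __post_init__(self) -> None:
        # Any model output that violates the contract fails loudly here.
        if self.label not in ALLOWED_LABELS:
            raise ValueError(f"unknown label {self.label!r}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


if __name__ == "__main__":
    print(TicketOutput("billing", 0.92))  # TicketOutput(label='billing', confidence=0.92)
    try:
        TicketOutput("sales", 0.8)
    except ValueError as err:
        print(f"rejected: {err}")  # rejected: unknown label 'sales'
```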

In practical terms, a strong candidate should be able to answer questions like these:

If a candidate jumps straight to tools and architecture without tightening the problem definition, that’s a warning sign.

A startup rarely loses because it chose the wrong framework first. It loses because nobody defined success tightly enough for engineering to execute cleanly.

A good founder hiring loop is simple and grounded.

| Hiring step | What to evaluate |
|---|---|
| Portfolio or resume review | Evidence of shipped systems, not just experiments |
| Technical screen | Ability to reason about architecture, data flow, and failure handling |
| Practical exercise | Judgment under realistic constraints |
| Final conversation | Communication, prioritization, and startup fit |

Use take-homes that resemble work the candidate would do on the job. Ask for a lightweight design doc, a service stub, an evaluation plan, or a debugging memo. Avoid tasks that reward free labor or trivia-heavy performance.

If you want to source candidates already filtered for startup environments, Underdog’s AI engineer hiring page is one route to review.

The broader lesson for both sides is the same. The AI engineer role belongs to people who bridge theory and implementation. Candidates win by proving they can operate under production constraints. Founders win by hiring for practical judgment, system ownership, and clarity of thought.

If you’re exploring startup AI roles or hiring for them, Underdog.io offers a curated marketplace focused on startup-ready tech talent. Candidates can apply once and get matched with vetted companies. Founders can review pre-screened profiles built for early-stage and high-growth teams.