The world of big data is often defined by its massive scale and complexity, making it difficult for tech professionals to identify the most promising career opportunities. From serverless data warehouses to unified analytics platforms, a handful of influential companies are shaping the industry, creating high-impact roles for engineers, product managers, and data scientists. This guide cuts through the noise to provide a focused look at the top big data companies actively hiring in key tech hubs like NYC and San Francisco, as well as for remote positions.

We'll break down what makes each company a compelling place to work, from its core technology to its funding stage and company culture. For each platform, you will find a concise summary, details on typical data roles, and practical tips for making your application stand out. This roundup is designed to give you a clear, actionable roadmap for your job search. For further perspective on the broader data intelligence and AI landscape, Parakeet AI's blog is a useful resource. Our goal is to give you the specific information you need to find a role that matches your skills and career ambitions in the growing data ecosystem.

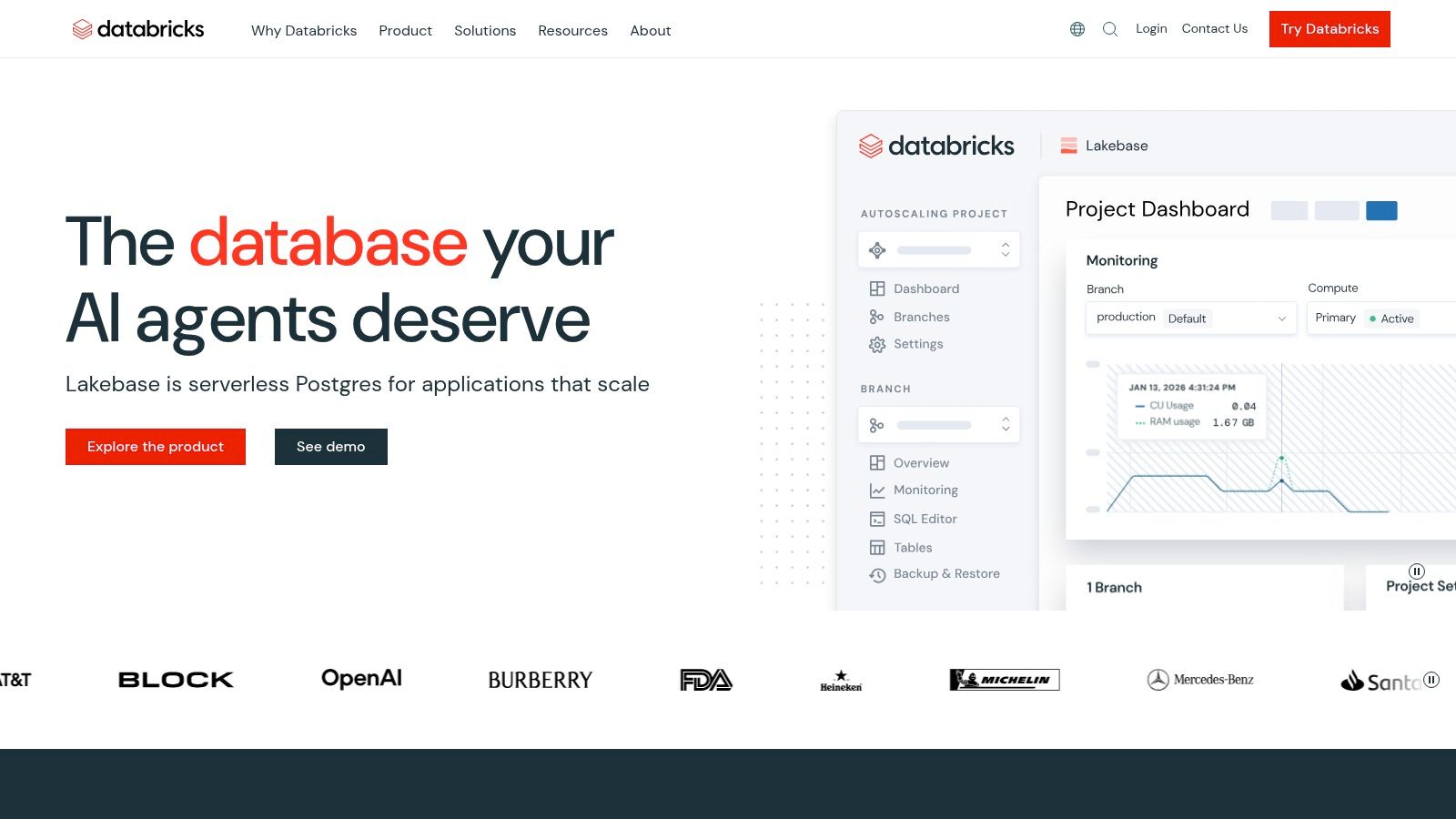

Databricks has established itself as a central player among big data companies by popularizing the "lakehouse" architecture. This model merges the cost-effective, open-format storage of a data lake with the reliability and performance features of a data warehouse. For data professionals, this means a single platform for data engineering, business intelligence (BI), and machine learning (ML), which reduces the need to stitch together multiple, disparate tools.

The platform is built on Apache Spark, which provides a powerful engine for processing massive datasets. For example, a retail company might use Databricks to ingest terabytes of raw clickstream data into a Delta Lake table. Delta Lake provides ACID transactions, so the machine learning models behind product recommendations are trained on consistent, high-quality data. Unity Catalog then adds a unified governance layer that lets the company control access to sensitive customer data across all teams.
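
To make the idea of ACID commits on a data lake more concrete, here is a toy, in-memory model of a versioned transaction log with optimistic concurrency. It is a sketch under simplifying assumptions, not Delta Lake's actual API or file format: the names `ToyTransactionLog`, `commit`, and `snapshot` are hypothetical, and a real table format persists its log to object storage and resolves conflicts far more carefully.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple

Action = Tuple[str, str]  # ("add" or "remove", data file path)


class ConcurrentCommitError(Exception):
    """Raised when another writer committed a newer version first."""


@dataclass
class ToyTransactionLog:
    """Hypothetical in-memory model of a versioned table log.

    Each commit is a list of file-level actions appended as a single unit,
    so readers never see half of a write.
    """

    commits: List[List[Action]] = field(default_factory=list)

    def latest_version(self) -> int:
        return len(self.commits) - 1  # -1 means the table is empty

    def commit(self, read_version: int, actions: List[Action]) -> int:
        # Optimistic concurrency: the writer states which version it based its
        # changes on; if another commit landed since then, reject the write.
        if read_version != self.latest_version():
            raise ConcurrentCommitError(
                f"read v{read_version}, but the table is at v{self.latest_version()}"
            )
        self.commits.append(list(actions))
        return self.latest_version()

    def snapshot(self, version: Optional[int] = None) -> Set[str]:
        """Replay commits up to `version` (default: latest) to list live files."""
        if version is None:
            version = self.latest_version()
        live: Set[str] = set()
        for actions in self.commits[: version + 1]:
            for op, path in actions:
                if op == "add":
                    live.add(path)
                elif op == "remove":
                    live.discard(path)
        return live


# Usage: two clean commits, then a stale writer that gets rejected.
log = ToyTransactionLog()
v0 = log.commit(read_version=-1, actions=[("add", "clicks-000.parquet")])
v1 = log.commit(read_version=v0, actions=[("add", "clicks-001.parquet")])
try:
    log.commit(read_version=v0, actions=[("remove", "clicks-000.parquet")])
    stale_writer_rejected = False
except ConcurrentCommitError:
    stale_writer_rejected = True
```

Because readers replay only fully committed versions, a training job that pins itself to `snapshot(0)` sees a stable set of files even while new clickstream data keeps arriving.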

For candidates, particularly those interested in ML and data engineering, Databricks represents an opportunity to work at the core of a widely adopted data ecosystem. The company is known for its strong engineering culture, stemming from its academic roots at UC Berkeley's AMPLab.

Website: https://databricks.com

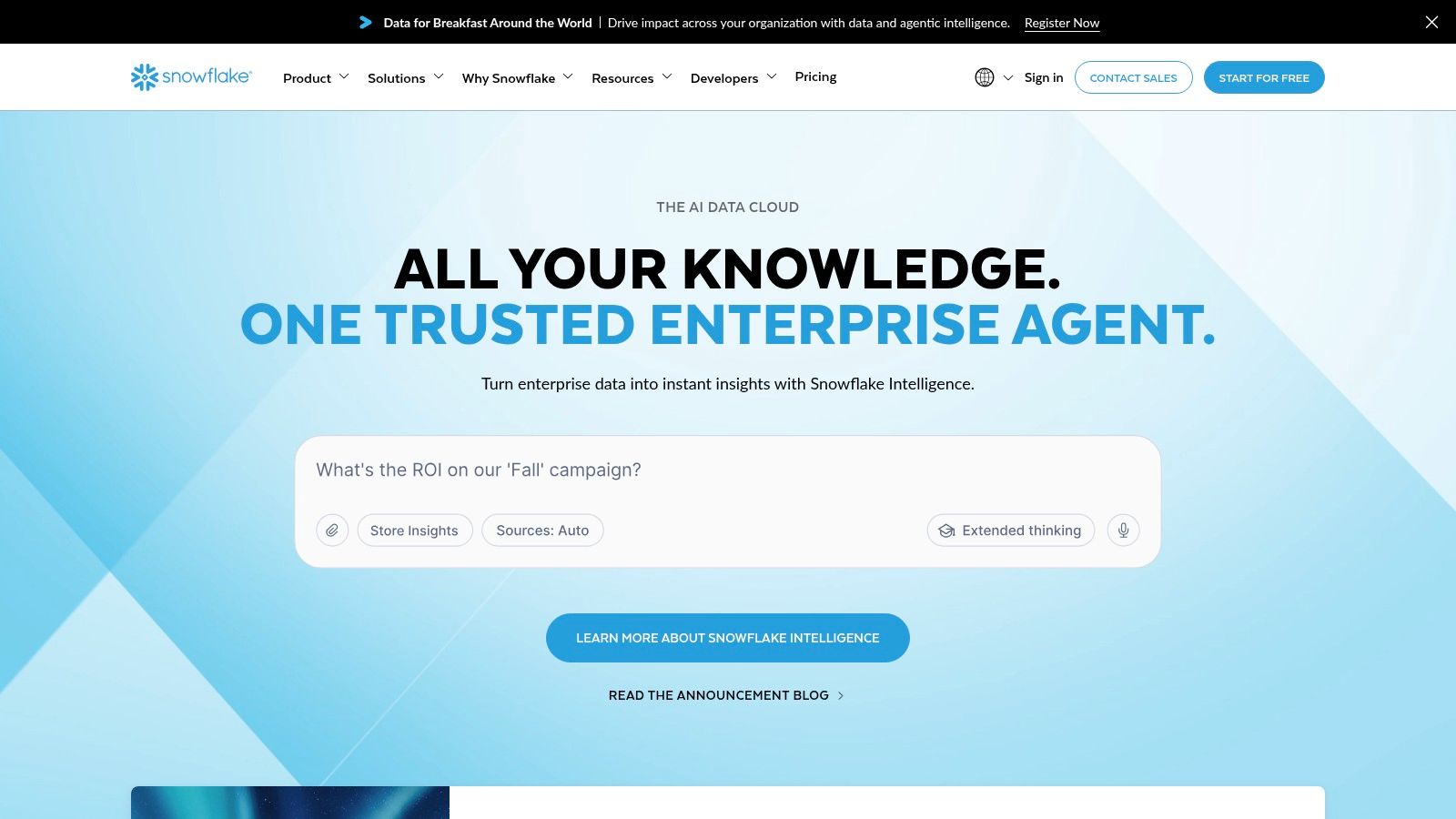

Snowflake has become a dominant force among big data companies by pioneering the "Data Cloud" concept. Its platform provides a fully managed service that separates storage and compute, allowing teams to run data warehousing, engineering, and data science workloads without interfering with each other. This architecture is known for its near-zero operational overhead, which lets organizations get started with SQL analytics and complex data applications quickly.

A key differentiator is its seamless data sharing and marketplace capabilities. For example, a CPG company can securely access live retail sales data from a partner directly in their own Snowflake account without any ETL, enabling near real-time sales forecasting. The introduction of Snowpark has also expanded its appeal beyond SQL, enabling developers to build and deploy Python code for ML model training directly within Snowflake, keeping the data secure and governed.

For job seekers, Snowflake offers a chance to work on a platform that has fundamentally changed how companies approach data analytics. The company is a prime example of product-led growth and is consistently ranked among the best tech companies to work for due to its strong market position and engineering-centric culture.

Website: https://www.snowflake.com

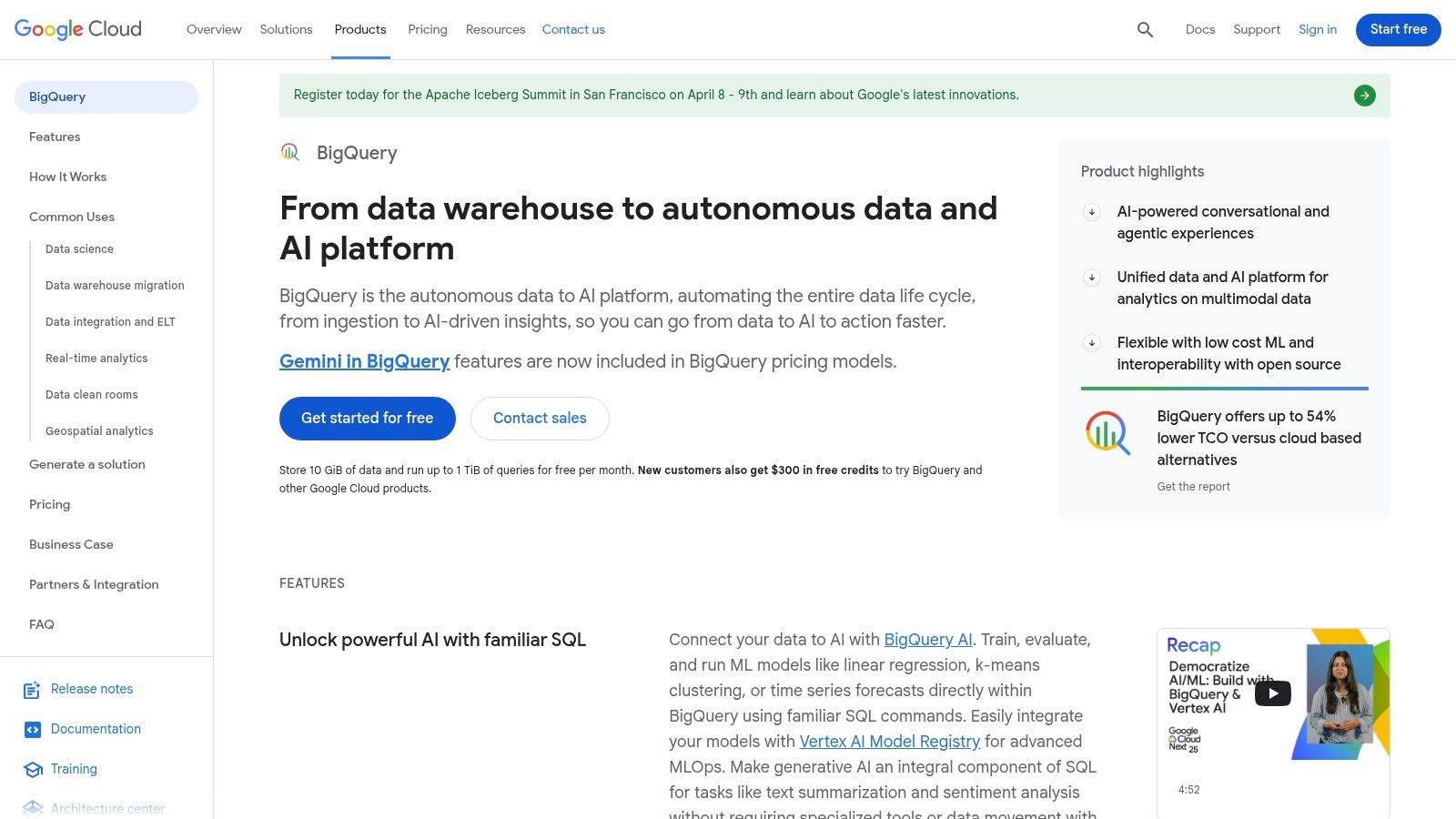

As one of the original serverless data warehouses, Google BigQuery remains a dominant force among big data companies by offering a fully managed, petabyte-scale analytics platform. Its core design separates storage from compute, allowing teams to analyze enormous datasets with familiar SQL syntax without managing any infrastructure. This low-operational model makes it an excellent choice for businesses that want to get from data to insights quickly.

The platform’s power lies in its autoscaling capabilities and tight integration with the Google Cloud Platform (GCP) ecosystem. A gaming company, for instance, can use BigQuery ML to build a churn prediction model directly on player telemetry data with a single SQL statement. Gemini in BigQuery can help analysts write and refine complex queries, which can reduce query costs and improve performance. Its pricing options also fit different workloads: on-demand pricing, billed by data processed, suits exploratory ad-hoc queries from a marketing team, while capacity-based pricing suits predictable, high-volume dashboard refreshes.

For engineers and analysts, working on or with Google BigQuery means operating at a massive scale within one of the most mature cloud environments. It provides a chance to solve complex data problems for a platform that underpins the analytics of thousands of global companies, from startups to Fortune 500 enterprises.

Website: https://cloud.google.com/bigquery

Microsoft Azure Synapse Analytics is a unified analytics service designed to accelerate time to insight from all data sources. It stands out by combining data integration, enterprise data warehousing, and big data analytics into a single, managed environment. For organizations heavily invested in the Microsoft ecosystem, Synapse offers a cohesive experience, bridging the gap between data pipelines, serverless SQL queries on data lakes, and powerful Apache Spark clusters.

The platform’s main advantage is its integrated nature. A manufacturing company can use Synapse Studio to create a pipeline that pulls IoT sensor data into a data lake, process it with a Spark notebook for anomaly detection, and then serve the results to an executive dashboard in Power BI, all without leaving the workspace. This consolidation reduces the tool fragmentation often seen in other data stacks and simplifies governance and security through Microsoft Entra ID (formerly Azure Active Directory).
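
The anomaly detection step in a pipeline like that can start very simply. As a hedged illustration, the function below flags outlying sensor readings with a modified z-score built on the median and median absolute deviation (MAD), a standard robust-statistics technique. It is plain Python rather than Synapse or Spark code, and the function name, the 3.5 cutoff, and the sample temperatures are all hypothetical; in a Spark notebook the same idea would likely be applied per device across a distributed DataFrame.

```python
from statistics import median
from typing import List, Sequence


def flag_anomalies(readings: Sequence[float], threshold: float = 3.5) -> List[int]:
    """Return indices of readings whose modified z-score exceeds `threshold`.

    Modified z-score = 0.6745 * |x - median| / MAD, where MAD is the median
    absolute deviation from the median.
    """
    med = median(readings)
    mad = median(abs(x - med) for x in readings)
    if mad == 0:
        # At least half the readings equal the median; flag any that differ.
        return [i for i, x in enumerate(readings) if x != med]
    return [
        i
        for i, x in enumerate(readings)
        if 0.6745 * abs(x - med) / mad > threshold
    ]


# Hypothetical batch of temperature readings from one machine; index 5 spikes.
temperatures = [71.2, 70.8, 71.5, 70.9, 71.1, 98.4, 71.0, 70.7]
anomalies = flag_anomalies(temperatures)
```

The median and MAD are used instead of the mean and standard deviation because, in a small batch, a single large spike inflates the standard deviation enough to hide itself.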

Working on the Azure Synapse team at Microsoft places you at the center of a major cloud provider's data strategy. It’s an opportunity to build and scale a platform that serves thousands of customers, from small businesses to Fortune 500 corporations, solving complex data challenges.

Website: https://azure.microsoft.com/services/synapse-analytics

Amazon EMR (Elastic MapReduce) is a foundational service in the cloud for running large-scale open-source data processing frameworks. Instead of offering a single, opinionated platform, EMR provides the flexibility to run popular engines like Apache Spark, Hive, Presto, and Flink directly on AWS infrastructure. This makes it a go-to choice for companies with existing big data workflows or those that require deep customization and control over their data processing environments.

The service integrates tightly with the AWS ecosystem, using S3 for persistent storage and the AWS Glue Data Catalog for metadata management. A bioinformatics company might use EMR to run a custom genomics processing pipeline on a cluster of EC2 Spot Instances, dramatically reducing compute costs. With Amazon EMR Serverless, a marketing tech firm can handle unpredictable spikes in ad impression data processing without managing any clusters. This versatility allows organizations to balance performance, cost, and operational overhead.
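
The savings from spot capacity are easy to estimate with back-of-the-envelope arithmetic. The sketch below uses entirely hypothetical numbers (an on-demand rate of $0.50 per node-hour, a 70% spot discount, and 10% of the work redone after spot interruptions); real prices vary by instance type, region, and time, and the flat rework model is a deliberate simplification.

```python
def spot_job_cost(node_hours: float, on_demand_rate: float,
                  spot_discount: float, rework_fraction: float) -> float:
    """Estimate the cost of a batch job run entirely on spot capacity.

    spot_discount: fraction off the on-demand price (0.7 means 70% off).
    rework_fraction: extra node-hours spent redoing work lost to interruptions.
    """
    spot_rate = on_demand_rate * (1 - spot_discount)
    return round(node_hours * (1 + rework_fraction) * spot_rate, 2)


# Hypothetical genomics pipeline needing 1,000 node-hours.
on_demand_cost = round(1_000 * 0.50, 2)
spot_cost = spot_job_cost(1_000, on_demand_rate=0.50,
                          spot_discount=0.70, rework_fraction=0.10)
```

Under these assumptions the job costs about $165 on spot capacity versus $500 on demand, which is why fault-tolerant batch work is often run on spot instances.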

A role focused on Amazon EMR places you at the intersection of cloud infrastructure and big data processing. It's an ideal environment for engineers who enjoy configuring, optimizing, and scaling distributed systems. Since EMR is used by a massive number of companies, from startups to large enterprises, the skills are highly transferable and in constant demand.

Website: https://aws.amazon.com/emr

Confluent Cloud has cemented its position among big data companies by offering Apache Kafka as a fully managed, cloud-native service. It addresses the significant operational burden of running Kafka clusters, allowing engineering teams to focus on building real-time data pipelines and streaming applications. The platform is centered on event streaming, which is critical for use cases like real-time analytics, fraud detection, and customer experience personalization.
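
Event streaming platforms like Kafka store events in partitioned, append-only logs, and events that share a key land in the same partition so their relative order is preserved. The toy model below illustrates that idea in plain Python. It is not the Kafka client API: the `ToyTopic` class is hypothetical, and it uses `zlib.crc32` as a stand-in for the murmur2 hash that Kafka's default partitioner applies to record keys.

```python
import zlib
from typing import Dict, List, Tuple

Event = Tuple[str, Dict]


class ToyTopic:
    """Hypothetical in-memory model of a partitioned, append-only topic."""

    def __init__(self, num_partitions: int) -> None:
        self.partitions: List[List[Event]] = [[] for _ in range(num_partitions)]

    def partition_for(self, key: str) -> int:
        # Same key -> same partition, which preserves per-key ordering.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def produce(self, key: str, value: Dict) -> Tuple[int, int]:
        """Append an event and return its (partition, offset)."""
        p = self.partition_for(key)
        self.partitions[p].append((key, value))
        return p, len(self.partitions[p]) - 1

    def consume(self, partition: int, offset: int) -> List[Event]:
        """Return every event in `partition` starting at `offset`."""
        return self.partitions[partition][offset:]


topic = ToyTopic(num_partitions=3)
for amount in (12.5, 80.0, 3.25):
    topic.produce("card-42", {"amount": amount})
topic.produce("card-7", {"amount": 19.99})

# A consumer reading card-42's partition from offset 0 sees its events in order.
p = topic.partition_for("card-42")
card_42_amounts = [v["amount"] for k, v in topic.consume(p, 0) if k == "card-42"]
```

Because each consumer tracks its own offset, several downstream applications, such as fraud detection and analytics, can read the same log independently and at their own pace.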

The service abstracts away the complexities of cluster provisioning, scaling, and maintenance. For example, a fintech company can use Confluent's fully managed Flink service to build a streaming application that detects fraudulent transactions within milliseconds, without managing servers. A rich ecosystem of more than 120 pre-built connectors lets an e-commerce platform stream database changes directly into Kafka for real-time inventory updates. The integrated Schema Registry enforces compatibility rules so that data formats remain consistent as applications evolve.
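
A fraud detection job like the one described above usually keeps per-key state over a time window. The sketch below shows one simple, hypothetical rule (flag a card that makes more than three transactions within 60 seconds) in plain Python rather than Flink's API. It assumes events for each card arrive in timestamp order; a real stream processor also has to handle late and out-of-order events, typically with watermarks.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class VelocityRule:
    """Hypothetical rule: flag a card with more than `max_txns` transactions
    inside a sliding window of `window_seconds`, using per-card state."""

    def __init__(self, max_txns: int, window_seconds: float) -> None:
        self.max_txns = max_txns
        self.window_seconds = window_seconds
        # card_id -> timestamps that still fall inside the window
        self.recent: Dict[str, Deque[float]] = defaultdict(deque)

    def process(self, card_id: str, ts: float) -> bool:
        window = self.recent[card_id]
        window.append(ts)
        # Evict timestamps that have slid out of the window.
        while window and window[0] <= ts - self.window_seconds:
            window.popleft()
        return len(window) > self.max_txns


rule = VelocityRule(max_txns=3, window_seconds=60)
stream = [("card-42", 0), ("card-7", 5), ("card-42", 10),
          ("card-42", 20), ("card-42", 30), ("card-7", 200)]
flags = [(card, ts) for card, ts in stream if rule.process(card, ts)]
```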

A role at Confluent means working on the technology that underpins the central nervous system of modern data-driven enterprises. The company was founded by the original creators of Apache Kafka, giving it a deep-rooted engineering identity focused on distributed systems and real-time data.

Website: https://www.confluent.io

Cloudera holds a foundational position among big data companies, particularly for enterprises managing complex, hybrid environments. The Cloudera Data Platform (CDP) is a data lakehouse that uniquely spans on-premises data centers and public clouds (AWS, Azure, GCP), providing a consistent data management and analytics layer across them all. This is crucial for organizations in regulated industries or those with significant legacy infrastructure that cannot move entirely to the cloud.

CDP integrates a wide array of open-source technologies, offering services for data engineering, data warehousing, and machine learning. Its key differentiator is the Shared Data Experience (SDX), which centralizes security and governance. For instance, a large bank can use SDX to enforce a single data access policy for customer information, whether an analyst is querying it with Impala on-premises or a data scientist is training a model with Spark in the cloud. Although CDP can carry more operational overhead than fully managed cloud services, its control and hybrid flexibility make it a strong fit for certain enterprise needs.
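
The value of a shared governance layer is that one policy definition applies no matter which engine reads the data. The toy sketch below makes that concrete. It is not how SDX is implemented or configured: the `ColumnPolicy` type, the policy list, and the two engine functions are hypothetical stand-ins for an on-premises Impala query and a cloud Spark job.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class ColumnPolicy:
    """Hypothetical rule: only `allowed_roles` may see `column` unmasked."""
    table: str
    column: str
    allowed_roles: FrozenSet[str]


# Defined once, centrally, and shared by every engine below.
SHARED_POLICIES: List[ColumnPolicy] = [
    ColumnPolicy("customers", "ssn", frozenset({"compliance"})),
]


def enforce(table: str, row: Dict[str, str], role: str) -> Dict[str, str]:
    """Return a copy of `row` with columns the role may not see masked."""
    result = dict(row)
    for policy in SHARED_POLICIES:
        if (policy.table == table and policy.column in result
                and role not in policy.allowed_roles):
            result[policy.column] = "***"
    return result


def impala_on_prem_query(row: Dict[str, str], role: str) -> Dict[str, str]:
    return enforce("customers", row, role)  # stand-in for an on-prem SQL engine


def spark_cloud_job(row: Dict[str, str], role: str) -> Dict[str, str]:
    return enforce("customers", row, role)  # stand-in for a cloud Spark job


row = {"name": "Ada", "ssn": "123-45-6789"}
analyst_view = impala_on_prem_query(row, role="analyst")
scientist_view = spark_cloud_job(row, role="data_scientist")
compliance_view = spark_cloud_job(row, role="compliance")
```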

A role at Cloudera offers engineers a chance to solve complex distributed systems problems at a massive scale for some of the world's largest organizations. It's an environment where you work deeply with the open-source Hadoop ecosystem and its modern successors, navigating the challenges of hybrid cloud deployments.

Website: https://www.cloudera.com

Navigating the ecosystem of big data companies requires more than just knowing names; it demands a deep understanding of the platforms that power modern data architectures. Throughout this guide, we've examined the foundational tools that organizations from nimble startups to global enterprises build upon. From Databricks' unified approach to data and AI in its lakehouse platform to Snowflake's accessible Data Cloud, each company offers a distinct vision for managing and interpreting massive datasets. We also explored the serverless power of Google BigQuery, the integrated analytics of Azure Synapse, and the managed framework flexibility of Amazon EMR.

For those focused on real-time data, Confluent Cloud provides a robust streaming solution, while Cloudera’s hybrid platform addresses complex on-premises and multi-cloud needs. Recognizing the specific problems these platforms solve is the first step toward positioning yourself as a valuable candidate. Your goal should be to move beyond surface-level familiarity and develop project-based expertise.

The path from learning about these platforms to landing a role at one of the premier big data companies is built on practical application. Your next steps should focus on translating theoretical knowledge into demonstrable skills.

Gaining expertise in these platforms makes you a highly attractive candidate not just for the companies that build them, but for the thousands of other organizations that use them. Once you have that expertise, you may be ready to explore remote jobs in the big data field. Ultimately, your ability to connect a company's business problems to a specific technological solution is what will set you apart and open doors to your next great opportunity.

Big data companies are organizations that build or operate platforms specifically designed to store, process, analyze, and derive insights from extremely large and complex datasets — typically at a scale that traditional databases can't handle. This includes cloud data warehouse providers like Snowflake, lakehouse platforms like Databricks, streaming infrastructure like Confluent, and managed analytics services from cloud hyperscalers like Google, Microsoft, and Amazon. The term also applies broadly to companies across every industry that rely heavily on large-scale data infrastructure to run their business.

The most sought-after big data companies for tech professionals in 2026 include Databricks, Snowflake, Google (BigQuery), Microsoft (Azure Synapse Analytics), Amazon Web Services (EMR), Confluent, and Cloudera. Each offers distinct technical challenges and career growth paths. Databricks and Confluent are particularly popular among engineers who want to work on open-source-rooted, high-impact platforms, while Snowflake and Google BigQuery attract candidates drawn to product-led, cloud-native environments. The best fit depends on whether you're more interested in building the platforms themselves or solving complex data problems at scale as a practitioner.

Data engineers and machine learning engineers are consistently the highest-demand roles across big data companies, followed closely by data scientists, analytics engineers, cloud architects, and site reliability engineers. Companies building data platforms also hire heavily for solutions architects and developer advocates who can bridge technical depth with customer communication. As AI capabilities become embedded in data platforms, roles at the intersection of data engineering and MLOps are growing especially fast.

The most valuable technical skills for landing a role at a big data company include proficiency in SQL and Python, hands-on experience with distributed processing frameworks like Apache Spark or Flink, familiarity with cloud platforms (AWS, GCP, or Azure), and a solid understanding of data modeling and pipeline architecture. For more specialized roles, experience with stream processing, data governance, Kubernetes, or ML infrastructure can set a candidate apart. Beyond technical skills, the ability to explain architectural tradeoffs clearly — for example, when to use a serverless data warehouse versus a managed cluster environment — is something hiring managers at these companies specifically test for.
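
One way to ground that kind of tradeoff discussion is a quick cost comparison. The sketch below compares a pay-per-query serverless warehouse with an always-on cluster using hypothetical prices ($5 per TB scanned and $4 per cluster-hour, running 730 hours a month); it deliberately ignores performance, concurrency, and operational effort, which matter just as much in a real design review.

```python
from typing import Dict


def monthly_costs(tb_scanned: float, price_per_tb: float,
                  cluster_hourly_rate: float, cluster_hours: float) -> Dict[str, float]:
    """Compare a per-TB-scanned serverless bill with a fixed cluster bill."""
    return {
        "serverless": tb_scanned * price_per_tb,
        "cluster": cluster_hourly_rate * cluster_hours,
    }


def break_even_tb(price_per_tb: float, cluster_hourly_rate: float,
                  cluster_hours: float) -> float:
    """Monthly TB scanned at which both options cost the same."""
    return cluster_hourly_rate * cluster_hours / price_per_tb


# Hypothetical prices; real pricing differs by vendor, region, and contract.
threshold_tb = break_even_tb(price_per_tb=5.0, cluster_hourly_rate=4.0, cluster_hours=730)
light_month = monthly_costs(100, 5.0, 4.0, 730)
heavy_month = monthly_costs(1_000, 5.0, 4.0, 730)
```

Under these made-up numbers, a team scanning less than about 584 TB a month pays less on the serverless option, while heavier, steady workloads favor the always-on cluster.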

What sets big data companies apart from other tech employers is the scale and complexity of their data infrastructure. They build and operate systems that process petabytes of data, often in real time, across distributed clusters in multiple cloud regions. The engineering challenges, such as distributed consistency, fault tolerance, and query optimization at massive scale, differ fundamentally from those at companies running standard web applications. For professionals, this often means tougher technical interviews focused on systems design and distributed computing, along with a stronger emphasis on performance and cost optimization in day-to-day work.

Many big data roles, particularly in engineering and data science, are remote-friendly. The cloud-native nature of modern data infrastructure means most day-to-day work happens through browser-based interfaces and code editors rather than in-person collaboration. Companies like Databricks, Snowflake, and Confluent all have significant remote workforces. That said, hybrid expectations vary by team and seniority, and some companies have pulled remote employees back toward office hubs in recent years — so it's worth clarifying work location policies during the interview process.

Salaries at big data companies rank among the highest in tech. Data engineers typically earn between $120,000 and $180,000 in base salary, depending on experience and location, with senior and staff-level roles at companies like Databricks and Snowflake pushing well above that. Machine learning engineers often command similar or higher ranges given the current demand for AI and ML expertise. Total compensation at later-stage companies frequently includes meaningful equity, and at cloud hyperscalers like Google and Microsoft, additional bonuses and benefits can make total packages significantly higher than base salary alone.

The most practical way to break into a big data role is to build project-based experience on the platforms you want to work with. Most big data companies offer free tiers or sandbox environments: Databricks Community Edition, Snowflake's 30-day trial, and Google BigQuery's free tier are good starting points. Building a project that processes a real dataset, documenting your architecture decisions on GitHub, and sharing it publicly demonstrates initiative and practical skill in a way that certifications alone don't. Pursuing cloud certifications (AWS, GCP, or Azure data specializations) also helps signal foundational knowledge to hiring teams, particularly for roles at companies heavily invested in those cloud ecosystems.

Tired of sending resumes into the void? Let top big data companies come to you. Underdog.io flips the script on job searching by matching you directly with hiring managers at high-growth tech companies and startups looking for your specific data skills. Stop applying and start interviewing by creating your free profile on Underdog.io today.