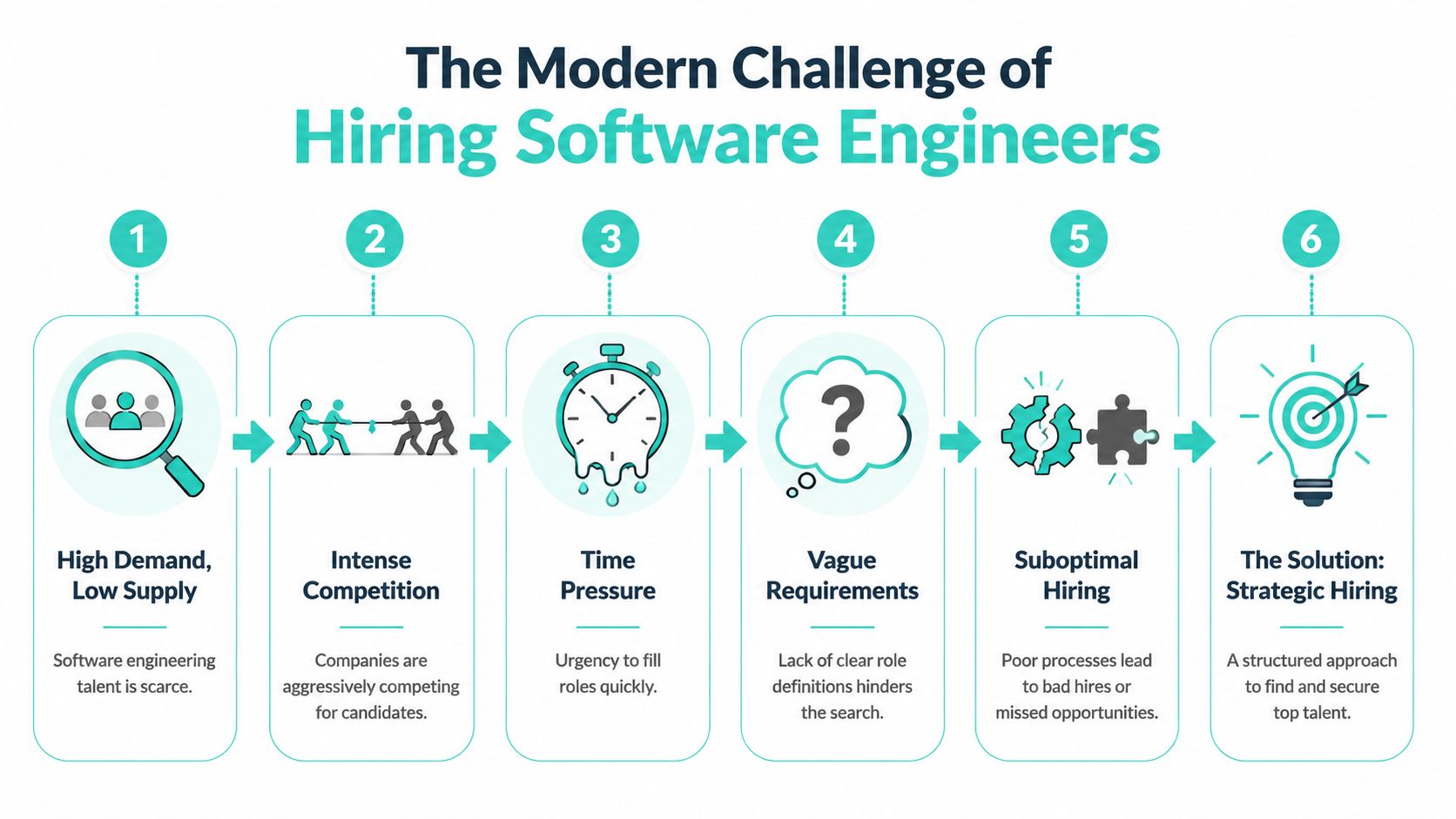

You probably know the feeling. You open your ATS and see a pile of applications, half of them barely relevant, a few strong on paper, and almost none that make you think, "Yes, this person can walk into our codebase and help us ship." Meanwhile, the role has been open long enough that the team is feeling it. Product wants speed. Engineering wants quality. Finance wants discipline. Candidates want clarity and a fast process.

That tension is normal at a startup. It also explains why a lot of hiring processes fail. The problem usually isn't effort. It's that teams start sourcing before they've defined the role, interview before they've aligned on standards, and make offers before they've sold the mission properly.

Hiring software engineers well comes down to one principle: treat hiring like product development. Define the problem clearly, design a process that produces signal, and remove steps that create noise.

Startup hiring gets harder when every open role carries outsized weight. One strong engineer can stabilize a system, raise the bar for code review, and unblock product work. One weak hire can soak up leadership time for months.

The broader market isn't making this easier. The U.S. Bureau of Labor Statistics projects 15% employment growth from 2024 to 2034 for software developers, quality assurance analysts, and testers, compared with 3% for all occupations. It also projects about 129,200 job openings annually over the decade, according to this software engineering job market overview. Demand isn't the issue. Competing for the right people is.

A startup usually can't win by acting like a large company with fewer resources. If you copy a bloated enterprise process, you'll move too slowly. If you swing too far the other way and improvise everything, you'll make inconsistent decisions and lose strong candidates.

Effective results come from a disciplined, lightweight process. I favor hiring systems that are structured enough to compare candidates fairly, but small enough that the team can run them. A useful example is the LeaveWizard hiring process, which shows what a transparent, documented approach can look like when a company takes candidate experience seriously.

Practical rule: If your team can't explain every interview stage in one minute, the process is probably doing too much.

Most startups don't fail to hire because they lack inbound volume. They fail because they don't know what signal they're looking for. They over-index on pedigree, ask vague interview questions, and let "seems smart" replace evidence.

The answer isn't more hustle. It's a playbook. Define the role precisely. Source in channels where signal is higher. Screen with real work, not trivia. Run an interview loop that tests collaboration and judgment. Then close well and onboard deliberately.

That's how small teams compete.

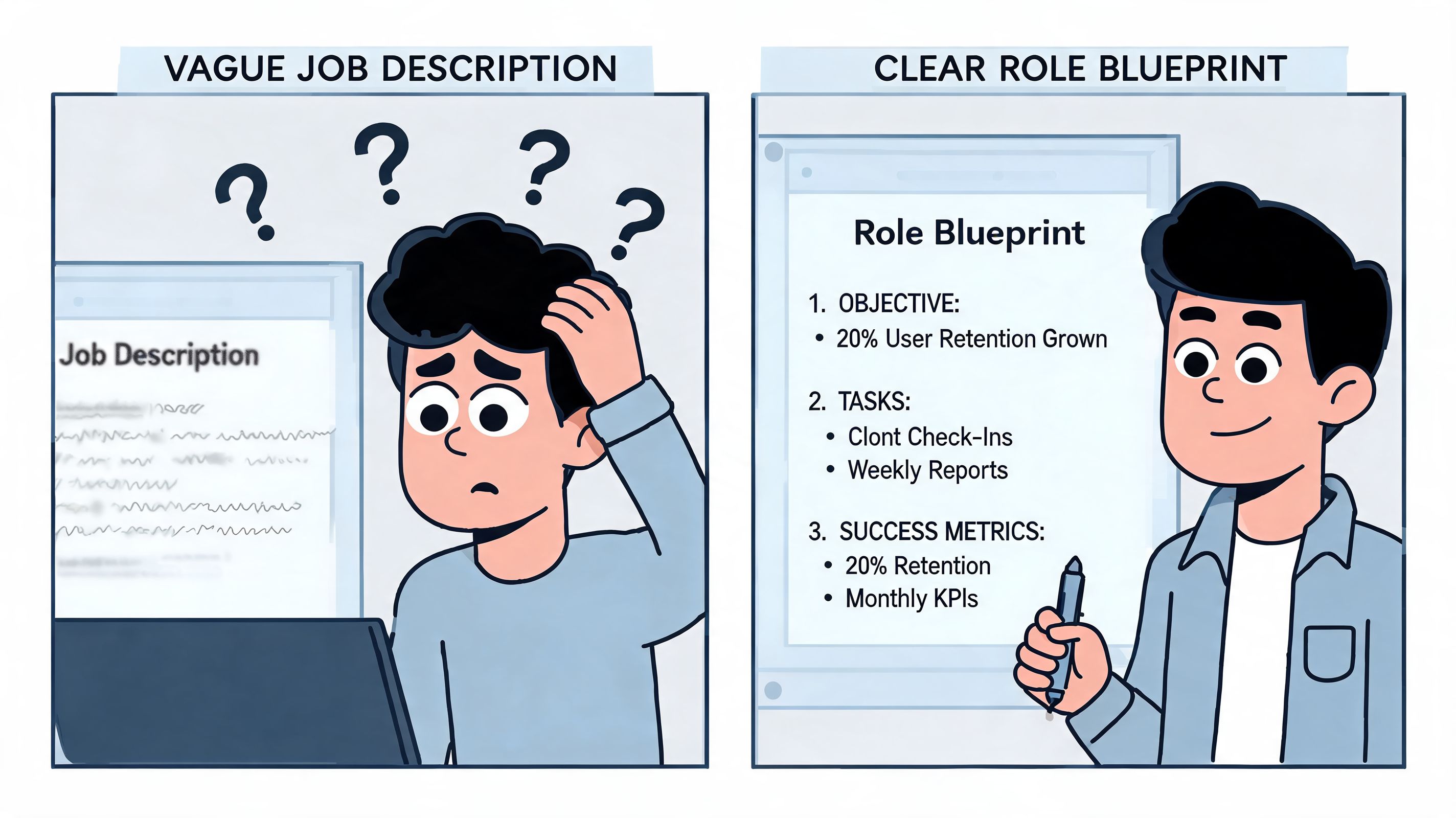

The most common hiring mistake is writing the job post before defining the job. A vague spec creates vague sourcing, vague interviews, and messy debriefs. By the time the team realizes they were hiring for different things, they've already wasted candidate time and their own.

That mistake matters more now. In the 2025 software engineering market, more than half of all advertised roles are above the senior level, which reflects an emphasis on people who can create impact quickly, according to The Pragmatic Engineer's 2025 market analysis. When you're hiring experienced engineers, ambiguity is expensive. Senior candidates will spot it immediately.

Before sourcing, write a one-page scorecard. Not a marketing job description. A real operating document.

Include these five parts:

1. Mission of the role: What problem does this person solve in the next year? "Own backend reliability for our core product" is useful. "Help build advanced solutions" is not.

2. Must-have competencies: Separate hard requirements from nice-to-haves. If the role needs someone who has managed a scaling monolith, say that. If microservices experience is optional, don't let it dominate the process.

3. Behavioral traits: Startups need engineers who handle ambiguity, communicate trade-offs, and unblock themselves. Those aren't soft extras. They're part of the job.

4. Failure modes to avoid: Write down what bad looks like. For example: needs heavy task definition, optimizes for elegance over delivery, or struggles with cross-functional communication.

5. Success outcomes: Define what "good in seat" means. If you can't describe what success looks like in the first few months, you aren't ready to interview.
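If it helps to keep the scorecard honest, you can treat it as structured data rather than a loose document. The sketch below is a minimal illustration in Python, not a standard tool: the `RoleScorecard` class, its field names, and the `missing_parts` helper are all hypothetical, and the example values are borrowed from the descriptions above.

```python
from dataclasses import dataclass, field


@dataclass
class RoleScorecard:
    """Hypothetical one-page scorecard; all field names are illustrative."""

    mission: str
    must_haves: list[str] = field(default_factory=list)
    nice_to_haves: list[str] = field(default_factory=list)  # optional, not required
    behavioral_traits: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)
    success_outcomes: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of the five required parts that are still empty."""
        required = {
            "mission": self.mission.strip(),
            "must_haves": self.must_haves,
            "behavioral_traits": self.behavioral_traits,
            "failure_modes": self.failure_modes,
            "success_outcomes": self.success_outcomes,
        }
        return [name for name, value in required.items() if not value]


# Example: a draft scorecard that has not defined success yet.
scorecard = RoleScorecard(
    mission="Own backend reliability for our core product",
    must_haves=["Has managed a scaling monolith"],
    nice_to_haves=["Microservices experience"],
    behavioral_traits=["Handles ambiguity", "Communicates trade-offs"],
    failure_modes=["Needs heavy task definition"],
)
print(scorecard.missing_parts())  # ['success_outcomes']
```

A non-empty result is a reasonable signal to hold off on sourcing. Keeping `nice_to_haves` as a separate field is a deliberate choice here, so optional experience can't quietly turn into a hard requirement.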

A "Senior Backend Engineer" can mean two very different jobs.

One version is a maintenance-and-scale role. You need someone who can improve performance, reduce operational pain, and make a legacy architecture safer to change.

Another version is a new-systems role. You need someone who can establish service boundaries, choose sensible infrastructure patterns, and prevent over-engineering.

Those are not interchangeable profiles. Hiring managers often collapse them into one job description and then wonder why interviews feel inconsistent.

Hire for the work that actually exists, not the title you think sounds right.

Don't evaluate engineers by activity. Evaluate them by business-relevant outcomes and team fit. For a startup, a useful scorecard usually includes a mix of technical and operational expectations such as:

A role scorecard also cleans up debriefs. Instead of "I liked them" or "they seemed senior," interviewers can compare observations against agreed criteria. That alone removes a lot of noise.
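To make "compare observations against agreed criteria" concrete, here is a rough sketch of a debrief summary in Python. Everything in it is an assumption for illustration: the 1-to-4 rating scale, the criterion names, the `summarize_debrief` function, and the spread threshold used to flag disagreement. Treat it as one possible shape, not a recommended scoring system.

```python
from statistics import mean

# Hypothetical ratings: interview stage -> criterion -> score on a 1-4 scale.
ratings = {
    "technical": {"reliability": 4, "communicates trade-offs": 3, "handles ambiguity": 2},
    "pairing": {"reliability": 3, "communicates trade-offs": 3, "handles ambiguity": 4},
    "behavioral": {"communicates trade-offs": 4, "handles ambiguity": 2},
}


def summarize_debrief(ratings, spread_threshold=2):
    """Group scores by criterion and flag criteria where interviewers disagree."""
    by_criterion = {}
    for scores in ratings.values():
        for criterion, score in scores.items():
            by_criterion.setdefault(criterion, []).append(score)

    summary = {}
    for criterion, scores in by_criterion.items():
        summary[criterion] = {
            "mean": round(mean(scores), 2),
            # A wide gap between highest and lowest score means the debrief
            # should discuss evidence before anyone averages it away.
            "needs_discussion": max(scores) - min(scores) >= spread_threshold,
        }
    return summary


summary = summarize_debrief(ratings)
for criterion, result in summary.items():
    print(criterion, result)
```

The averages matter less than the flags. A criterion where interviewers landed far apart is where the debrief conversation should spend its time.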

The worst sourcing strategy for a startup is trying to out-volume bigger companies on public channels. You'll get applications. You won't necessarily get attention from the people you want.

A better approach is to build a sourcing mix based on signal, speed, and access to people who aren't running a formal job search. That matters because startups need access to the 85% of the tech workforce who are passive candidates. Curated marketplaces offer a discreet way to reach that talent without public postings, as described in this guide to hiring software engineers.

| Channel | Candidate Quality | Access to Passive Talent | Speed to Hire | Hiring Manager Effort |
|---|---|---|---|---|
| Public job boards | Broad and inconsistent | Low | Slower when volume is high | High due to screening load |
| LinkedIn outbound | Can be strong with focused outreach | Moderate | Moderate | High because messaging and follow-up take time |
| Agency recruiters | Varies widely by recruiter and role | Moderate | Can be fast if the recruiter understands the brief | Moderate, but calibration still matters |
| Employee referrals | Often high trust and context | Moderate | Often fast | Low to moderate |
| Curated marketplaces | Higher signal when vetting is real | High | Faster when the pool is pre-qualified | Lower because screening starts later |

Public postings still have a place. They're useful when you need broad discovery, when your employer brand is strong, or when the role is likely to attract people already interested in your company. They also help with transparency. Good candidates want to see what you're hiring for.

But public inbound has a known downside. It creates review work. A startup can burn a surprising amount of manager time sorting through applications that look adjacent but aren't close enough.

Passive candidates are often stronger because they're making a selective move, not spraying applications. They usually ask sharper questions about roadmap, management quality, and technical direction. That can feel harder in the moment, but it's a good sign.

Discreet channels matter here. Candidate-centric marketplaces and warm-network sourcing let you reach employed engineers without requiring them to publicly raise their hand. If you're trying to learn more about that motion, this passive candidate sourcing guide is worth reading.

One practical option in this category is Underdog.io, a curated marketplace where vetted candidates create profiles and companies reach out when there's mutual fit. For a startup, the main value isn't just access. It's reduced noise compared with broad inbound.

I wouldn't rely on one channel. I would combine a few on purpose:

The startup advantage isn't budget. It's specificity. If you can clearly explain the problem, the team, and why the role matters, you'll win candidates who care about ownership more than logo prestige.

A weak screening process creates two bad outcomes at once. It filters out strong people who don't want to jump through nonsense, and it pushes weak people into later rounds where the cost is higher.

The fix is simple. Keep screening short, role-relevant, and easy to understand.

The first screen should confirm alignment. The second should test foundational skill.

The alignment screen is usually a short conversation. Cover motivation, role expectations, communication quality, and practical constraints. You don't need trick questions. You need to know whether the candidate understands the role and whether the role makes sense for them.

The technical screen should test a slice of the actual job. For a backend engineer, that might be a focused debugging discussion, a code review exercise, or a short technical call around APIs, data modeling, and production trade-offs. For a frontend engineer, it could be component design, browser behavior, or state management decisions.

Skip abstract brain teasers. Skip generic "gotcha" puzzles that reward memorization. Skip take-homes that feel like unpaid consulting work.

A good screen answers a narrow question: does this person have enough baseline skill and communication ability to justify a full loop?

Here are better screening patterns:

Respect for candidate time is part of evaluation. Strong engineers notice when your process is sloppy.

A useful framing for founders is this guide to not wasting an engineer's time in interviews. It reflects a principle that matters in competitive hiring. Candidates are evaluating your company while you're evaluating them.

One of the most practical ideas in technical screening is encouraging candidates to practice the format before the loop. Data from over 10,000 interviews shows that completing at least 5 mock technical interviews before the live loop can lead to nearly 2X higher pass rates, because performance becomes more stable and more reflective of actual ability, according to interviewing.io's technical interview practice analysis.

That doesn't mean you should coach candidates on your exact questions. It means you should want a process that surfaces true ability rather than interview rust.

A tight screen protects the team and gives candidates confidence that the rest of the process will be run professionally.

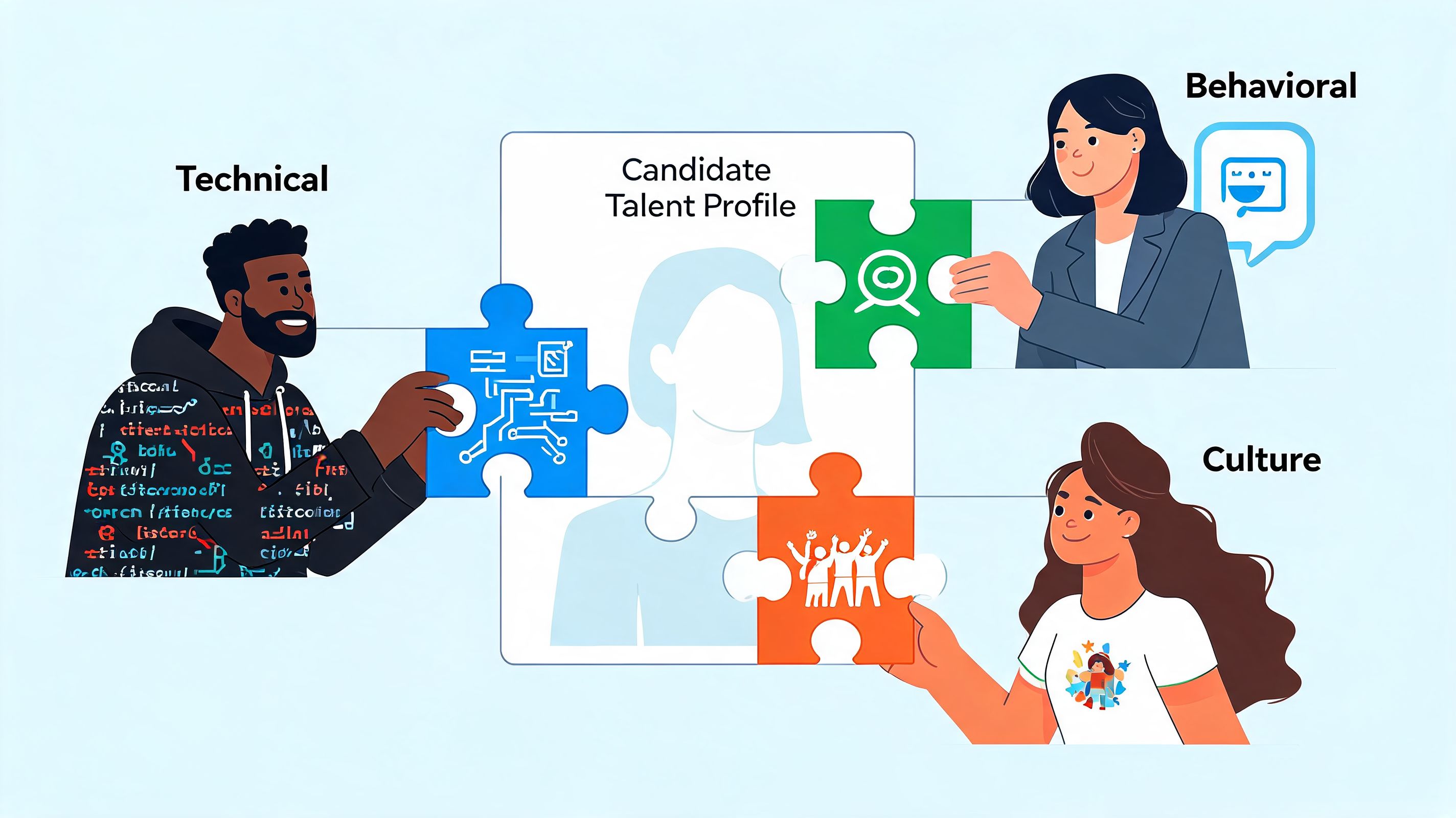

By the time a candidate reaches the full loop, the question shouldn't be "Are they smart?" You should already have enough evidence for that. The primary question is whether they can succeed in your environment, with your constraints, on your team.

That requires a loop that tests judgment, collaboration, and role-specific depth. Not just raw recall.

A well-run loop usually has four parts.

First, a practical technical interview. For a senior backend engineer, I want to hear how they approach reliability, schema changes, service boundaries, and failure handling. If they jump straight to tooling without clarifying constraints, that's a warning sign.

Second, a collaborative coding or pair session. Not because pair programming is the whole job, but because it reveals a lot fast. Can they explain decisions? Do they accept feedback? Do they freeze when a small bug appears?

Third, a behavioral interview with a hiring manager or founder. Use this session to test ownership. Ask about a project that went sideways. Ask what they changed when a first solution didn't work. Ask where they disagreed with product or leadership and how they handled it.

Fourth, a team conversation. Keep it grounded. The point isn't a culture test disguised as small talk. It's to see whether working together feels constructive and clear.

In system design, strong candidates usually start by clarifying the use case, constraints, and trade-offs. They don't rush into architecture theater. They decide what matters first.

In pairing, good candidates narrate enough to keep another engineer involved. They don't need to perform constantly, but they should make their thinking visible. If they hit friction, they stay engaged rather than defensive.

In behavioral interviews, the best answers are specific. They name the problem, the trade-off, the action, and the result. Weak answers stay abstract and polished.

The goal of the loop isn't to trap someone. It's to create enough realistic interaction that the job becomes visible.

Consistency is lost when every interviewer relies on separate standards. Calibration matters more than many organizations realize.

A simple structure helps:

One more point matters at startups. Interviewers should understand the company's actual engineering context. If your environment is messy, fast-moving, and customer-driven, don't build a loop that rewards only polished whiteboard answers. Build one that identifies people who can make progress under real conditions.

Most hiring teams treat the offer as administration. It isn't. It's part of the sale. The candidate is deciding whether your company is worth the risk, whether the role is as real as it sounded, and whether the team will support them once they join.

A startup doesn't need the flashiest package to win. It needs a credible one, delivered clearly.

A good offer conversation covers four things.

The first is cash compensation. Be direct and concrete. Don't make candidates decode ranges, caveats, or internal leveling logic in real time.

The second is equity. Explain what it is, why the company uses it, and how you think about upside and risk. Candidates don't need a sales pitch. They need honesty.

The third is scope. Re-state why you're hiring this role now, what success looks like, and who they'll work with.

The fourth is growth. Strong engineers want to know what they can own and how decisions get made.

If a candidate has questions about process and decision-making at the acceptance stage, practical resources like this job offer acceptance guide can help them think clearly about the trade-offs. That's good for both sides. Informed candidates make better long-term decisions.

Closing calls go poorly when companies slip into urgency without conviction. "We need an answer quickly" is not a compelling reason to join.

What works better is specificity:

Candidates accept startup risk when they believe the company knows where it's going and why they matter in getting there.

A strong process can still fail if the first month is chaotic. Startups often hire carefully, then onboard casually. That's backwards.

A useful onboarding plan has three layers.

Give the new engineer enough structure to orient without overwhelming them.

Expand ownership carefully. Don't load the new hire to full capacity on day one just because the backlog is long.

That pacing matters. Teams that allow 20% to 30% slack time for learning and experimentation report 15% to 20% higher creativity and 25% lower burnout, according to this Business Insider summary of software engineering hiring and productivity trends. Good onboarding builds capability before it maximizes output.

The engineer should understand the product, know the key relationships, and own a meaningful part of the system. They should also know how success is measured.

A simple framework works well:

| Timeframe | Focus | Manager responsibility |
|---|---|---|
| First weeks | Context and confidence | Reduce ambiguity and create early traction |
| First month | Guided ownership | Increase scope while keeping support close |
| First quarter | Independent contribution | Confirm strengths, close gaps, and set next goals |

The hidden value of onboarding is retention. Engineers stay when they feel effective, trusted, and connected to real work. They leave when the role they accepted doesn't match the role they entered.

Hiring software engineers well isn't one decision. It's a chain of decisions. Role clarity affects sourcing. Sourcing affects interview quality. Interview quality affects closing. Closing affects onboarding. If you want better outcomes, tighten the chain.

If you're hiring at a startup and want a cleaner way to reach vetted engineering talent without turning your team into a full-time recruiting function, Underdog.io is worth considering. It gives companies a way to connect with startup-oriented candidates through a curated marketplace, which can be especially useful when you care more about fit and signal than raw applicant volume.