You’re probably here because an AI project stopped being a slide deck and became a hiring problem.

Maybe you need someone to ship the first LLM feature into your product. Maybe your existing engineers built a prototype, but nobody trusts it in production. Maybe applicants say “AI” on every resume, yet very few can explain how they’d handle retrieval quality, latency, evaluation, or user-facing failure modes without hand-waving.

That’s the core challenge when you need to hire AI engineering talent at a startup. The title sounds clear until you start interviewing. Then you realize the market bundles together very different people: model builders, infra specialists, and product-minded implementers. If you don’t separate those profiles early, you lose weeks on the wrong candidates and usually end up rewriting the role.

The startup version of this problem is sharper. You don’t have a giant budget, a long bench of domain experts, or room for a bad hire. You need someone who can work with ambiguity, ship under constraints, and make sensible trade-offs when the model, the product, and the data all need work at the same time.

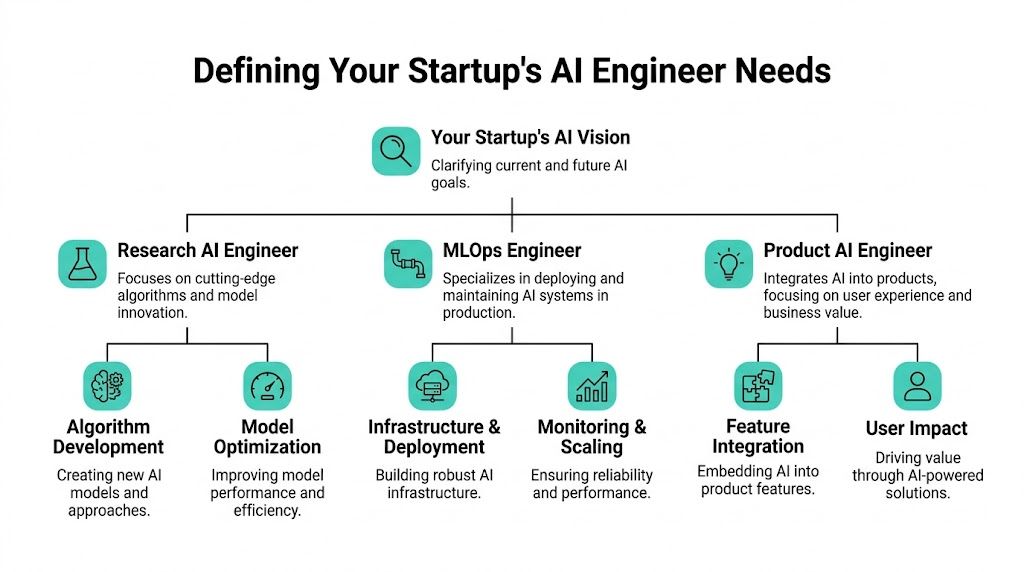

Most founders start too broad. They write “AI Engineer” when they need one of three different profiles.

That confusion gets expensive fast because AI demand isn’t uniform. According to METR’s analysis of early-2025 AI work and hiring signals, job postings for Generative AI Engineer roles have grown 7x, and 35 times more non-engineer IT roles now require generative AI skills. That is one reason teams now need more cross-functional judgment, not just raw model-building skill.

Don’t begin with the stack. Begin with the business bottleneck.

Ask these questions first:

Are you trying to launch an AI feature fast?

If yes, you usually need a Product AI Engineer. This person can wire models into a workflow, shape prompts and evaluation loops, build guardrails, and make the feature usable for customers.

Are you trying to make AI systems reliable in production?

Then you’re closer to an AI Infrastructure or MLOps Engineer. This person thinks in pipelines, observability, deployment, cost control, rollback paths, and uptime.

Are you trying to discover something novel?

That points toward an Applied AI Scientist or research-oriented engineer. Startups often overhire here. Unless your advantage comes from proprietary modeling work, this is rarely the first AI hire.

Practical rule: Hire for the failure mode you already have. If demos work but production breaks, you need infrastructure. If infra is stable but the feature feels useless, you need product-minded AI talent.
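As a rough illustration of that rule, the mapping from bottleneck to first hire can be written down as a small lookup. This is only a sketch: the bottleneck labels and the `first_hire_for` helper are hypothetical names invented for this example, and real situations rarely reduce to a single key.

```python
from enum import Enum


class Archetype(Enum):
    PRODUCT = "Product AI Engineer"
    INFRA = "AI Infrastructure or MLOps Engineer"
    SCIENTIST = "Applied AI Scientist"


# Hypothetical bottleneck labels; rename them to match how your team talks.
BOTTLENECK_TO_ARCHETYPE = {
    "ship_feature_fast": Archetype.PRODUCT,
    "feature_feels_useless": Archetype.PRODUCT,
    "production_breaks": Archetype.INFRA,
    "novel_modeling_needed": Archetype.SCIENTIST,
}


def first_hire_for(bottleneck: str) -> Archetype:
    """Return the archetype suggested for the failure mode you already have."""
    try:
        return BOTTLENECK_TO_ARCHETYPE[bottleneck]
    except KeyError:
        raise ValueError(f"Unknown bottleneck: {bottleneck!r}") from None


if __name__ == "__main__":
    print(first_hire_for("production_breaks").value)
```

If two bottlenecks apply at once, the lookup will not resolve that for you. Pick the one that is blocking customers today and treat the other as a tolerated gap.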

A simple way to scope the role is to map it to what success looks like after six months.

| Archetype | Best fit for | What they usually own | Common hiring mistake |
|---|---|---|---|
| Product AI Engineer | First AI features, copilots, search, workflow automation | Model integration, evaluation, UX trade-offs, prompt and retrieval design | Hiring a researcher who doesn’t care about user outcomes |
| MLOps or AI Infrastructure Engineer | Production hardening, reliability, scaling, deployment | Pipelines, monitoring, serving, model lifecycle, infra decisions | Hiring a notebook-heavy builder with weak operational depth |
| Applied AI Scientist | Novel methods, experimentation, domain-specific modeling | Model experimentation, adaptation, performance tuning, research loops | Hiring this role before the product problem is even clear |

Founders often ask for one person who can do all three. That person exists, but they’re hard to find, expensive, and often don’t want a role that blends too many unrelated responsibilities.

A better approach is to define the primary job and the tolerated gaps. For example:

The shortest useful job description is not a list of tools. It’s a sentence like: “We need an engineer who can turn a messy LLM prototype into a product feature customers trust.” That framing attracts the right people and filters out candidates who only want to tinker.

If you need a quick mental model for how startup teams break down these roles in practice, this guide on what an AI engineer does in startup hiring is a useful reference point.

The strongest AI candidates usually aren’t searching job boards all day. They’re employed, selective, and overloaded with generic outreach.

That’s why broad volume recruiting works poorly here. According to Aura’s 2025 AI job market data, AI-related job postings more than doubled to nearly 139,000 between January and April 2025. Even after a slight cooling, AI roles still represented 10–12% of all software jobs, which suggests AI hiring has become embedded rather than temporary.

If you need to hire AI engineering talent, the goal isn’t “more applicants.” The goal is fewer, better conversations.

The most useful sourcing channels tend to be:

Open-source contribution trails

Look at people maintaining or contributing to tooling around inference, evaluation, vector workflows, orchestration, or model serving. Commit history often tells you more than a polished resume.

Kaggle and technical communities

Not because competition winners automatically make great startup hires. They don’t. But serious participation can reveal how someone approaches data, experimentation, and iteration.

Conference papers and talks

This is useful for research-heavy roles, but also for finding applied engineers who can communicate clearly about trade-offs.

Warm networks from operators

Your best source is often a staff engineer, ML lead, or founder who knows who gets things shipped.

Most outreach fails because it sounds interchangeable. Good candidates can tell when you copied a template and swapped in their name.

A message worth sending usually includes:

Why them specifically

Mention the project, repo, paper, deployment story, or technical choice that got your attention.

What problem is genuinely hard

“We’re building with AI” is weak. “We need to make retrieval quality dependable inside a user-facing workflow” is better.

What they’d own

Senior people want scope, not just tasks.

Why a startup is an advantage

Access to product decisions, faster shipping cycles, tighter feedback loops, and fewer layers between engineering work and customer impact.

The best startup recruiting message doesn’t sell perks first. It sells a hard problem, clear ownership, and a reason this person should care.

If your team is cleaning up process while scaling outreach, a practical roundup of best tools for recruiters can help you decide where automation helps and where it just adds noise.

There’s a point where more outbound doesn’t improve results. It just creates more screening work.

That’s where curated channels can make sense, especially when you need passive candidates. A marketplace like passive candidate sourcing through Underdog.io is useful when you want pre-vetted startup talent and prefer mutual-interest introductions over cold outreach at scale. That’s different from posting a role and waiting for keyword-matched applications.

The trade-off is simple. Broad channels maximize reach. Curated channels improve signal. For AI hiring, signal usually wins.

Traditional coding interviews miss too much for AI roles.

They tell you whether someone can solve a bounded problem in an artificial setting. They rarely tell you whether the person can debug a brittle retrieval chain, choose a useful evaluation strategy, reason about model failure in production, or push back on a bad product requirement.

The problem is bigger now because hiring integrity has changed. Strong AI tools have pushed many candidates to generate resumes and solve interview tasks with assistance. One expert at the AI Engineer World’s Fair said hiring managers should operate under the assumption that “everyone is cheating with AI,” as discussed in this AI Engineer World’s Fair panel.

The right interview loop for AI hiring should test judgment under realistic constraints.

I like a four-part structure:

Each stage should answer a different question.

Don’t ask candidates to recite architectures from memory. Ask them to walk through something they built.

Useful prompts include:

Listen for operational detail. Strong candidates talk about data quality, user behavior, latency, fallback behavior, observability, and edge cases. Weak candidates stay abstract and tool-centric.

A good live interview isn’t “solve this algorithm.” It’s “here’s a product problem, how would you structure it?”

For example, give them a scenario:

Your team wants an internal assistant over messy documentation. Answers are inconsistent. Trust is low. What would you investigate first?

Good candidates won’t jump straight to “fine-tune a model.” They’ll ask about data shape, retrieval quality, expected user behavior, evaluation criteria, failure costs, and whether the problem should even be solved with a generative approach.

Candidates who can structure ambiguity well usually outperform candidates who only speak fluently about tools.
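To make “retrieval quality” and “evaluation criteria” concrete, here is a minimal sketch of the kind of check a strong candidate might propose for the documentation-assistant scenario: recall at k over a small hand-labeled set of questions. The function names, the document IDs, and the choice of recall@k as the metric are assumptions made for illustration, not a prescribed method.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        raise ValueError("need at least one relevant document")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(cases: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved_ids, relevant_ids) pairs."""
    if not cases:
        raise ValueError("need at least one labeled case")
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in cases) / len(cases)


if __name__ == "__main__":
    # Hypothetical labeled cases: ranked retrieved doc IDs and the IDs a human marked relevant.
    labeled_cases = [
        (["onboarding.md", "vpn.md", "expenses.md"], {"onboarding.md"}),
        (["vpn.md", "sso.md", "laptops.md"], {"sso.md", "mfa.md"}),
    ]
    print(f"mean recall@2: {mean_recall_at_k(labeled_cases, k=2):.2f}")
```

The metric itself matters less than the habit. A candidate who asks for a labeled set before touching the model is showing the judgment this stage is meant to surface.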

Take-home projects and live sessions can both work. They measure different things.

| Format | What it reveals | Where it fails |
|---|---|---|
| Take-home project | Writing quality, architecture choices, depth of thought | Easy to over-assist with AI tools or overinvest time |
| Live pair session | Real-time reasoning, debugging, communication | Can disadvantage people who need more time to think |
| System walkthrough | Practical ownership and operational maturity | Depends on the candidate having relevant prior work |

For startup hiring, I prefer a short work sample plus a live review. Let them build something constrained, then ask them to explain every choice. The review often matters more than the artifact.

You won’t eliminate AI-assisted cheating. You can make it much less useful.

A few methods work well:

Ask for iteration, not just output

Have the candidate change constraints midstream. Add a latency cap, a noisy dataset, or a stricter product requirement.

Use live explanation checkpoints

Stop and ask why they made a specific choice. People who outsourced the thinking usually struggle here.

Restrict tool use only when it matches the job

If the job allows AI assistance, test how they use it responsibly. If a task is supposed to reveal raw reasoning, say so clearly and scope it accordingly.

Probe for failure

Ask where their design will break. Real builders usually know.

One last point. Don’t turn the process into a trap. Great candidates won’t tolerate an adversarial loop. The goal is to verify authenticity while still giving serious people a fair shot.

If you’re trying to hire AI engineering talent with a startup budget, compensation has to be precise and the pitch has to be honest.

The salary market is tough. Workers with AI skills command a 56% wage premium over peers without those skills. The median annual salary for AI roles reached $156,998 in Q1 2025, and 35% of companies cite high salaries as their biggest recruitment hurdle, according to PwC’s AI Jobs Barometer.

Founders sometimes react to AI salary pressure in one of two bad ways. They either underpay and hope the mission carries the deal, or they throw out a top-end number without being ready to explain scope, equity, and growth.

Neither works well.

A strong offer usually includes these pieces:

A clean salary position

Not necessarily the highest, but credible for the role and market.

Equity explained like an adult conversation

Good candidates will ask how you think about upside, dilution, and expected value. Be ready.

Clear ownership

“You’ll own our AI roadmap for product search and customer-facing assistants” is stronger than “you’ll help with AI initiatives.”

Access

At a startup, direct access to founders, product decisions, and fast shipping can outweigh some cash gap if it’s real.
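For the equity conversation in particular, it helps to have the arithmetic ready before the candidate asks. The sketch below is a deliberately simplified model: every number is hypothetical, the function names are invented for this example, and it ignores liquidation preferences, taxes, and vesting, all of which can change the outcome materially.

```python
def diluted_ownership(initial_fraction: float, round_dilutions: list[float]) -> float:
    """Ownership left after each funding round dilutes existing holders."""
    fraction = initial_fraction
    for dilution in round_dilutions:
        if not 0 <= dilution < 1:
            raise ValueError("each dilution must be in [0, 1)")
        fraction *= 1 - dilution
    return fraction


def equity_value(
    initial_fraction: float,
    round_dilutions: list[float],
    exit_value: float,
    strike_cost: float = 0.0,
) -> float:
    """Value of the stake at exit, net of the cost to exercise, floored at zero."""
    stake = diluted_ownership(initial_fraction, round_dilutions) * exit_value
    return max(stake - strike_cost, 0.0)


if __name__ == "__main__":
    # Hypothetical offer: 0.5% grant, two future rounds at 20% dilution each,
    # a $200M exit, and $20k total cost to exercise the options.
    remaining = diluted_ownership(0.005, [0.20, 0.20])
    value = equity_value(0.005, [0.20, 0.20], 200_000_000, strike_cost=20_000)
    print(f"ownership after dilution: {remaining:.4%}")
    print(f"value at exit, net of strike: ${value:,.0f}")
```

In this made-up scenario the 0.5% grant shrinks to roughly 0.32% and is worth about $640,000 at exit before subtracting the strike cost. Walking a candidate through a few scenarios like this, including a pessimistic one, tends to build more trust than quoting a single headline number.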

A startup usually loses if it tries to copy a big tech package without big tech resources. It can win by offering a different shape of role.

That includes:

Visible impact

The engineer can see the feature ship, learn from users, and influence priorities.

Broader problem ownership

Strong candidates often want to shape the system, not just one narrow layer.

Intellectual range

Many AI engineers enjoy working across model behavior, product experience, and infrastructure rather than sitting in a silo.

If your only pitch is “we also use AI,” you’ll lose. If your pitch is “you’ll define how AI actually works in the product,” you have a shot.

The close usually fails before the offer goes out. It fails when the candidate still has unanswered questions about strategy, resourcing, or seriousness.

Use the final conversations to remove uncertainty:

If you need a practical framework for packaging salary, equity, and benefits clearly, this guide to startup compensation and benefits is a useful reference.

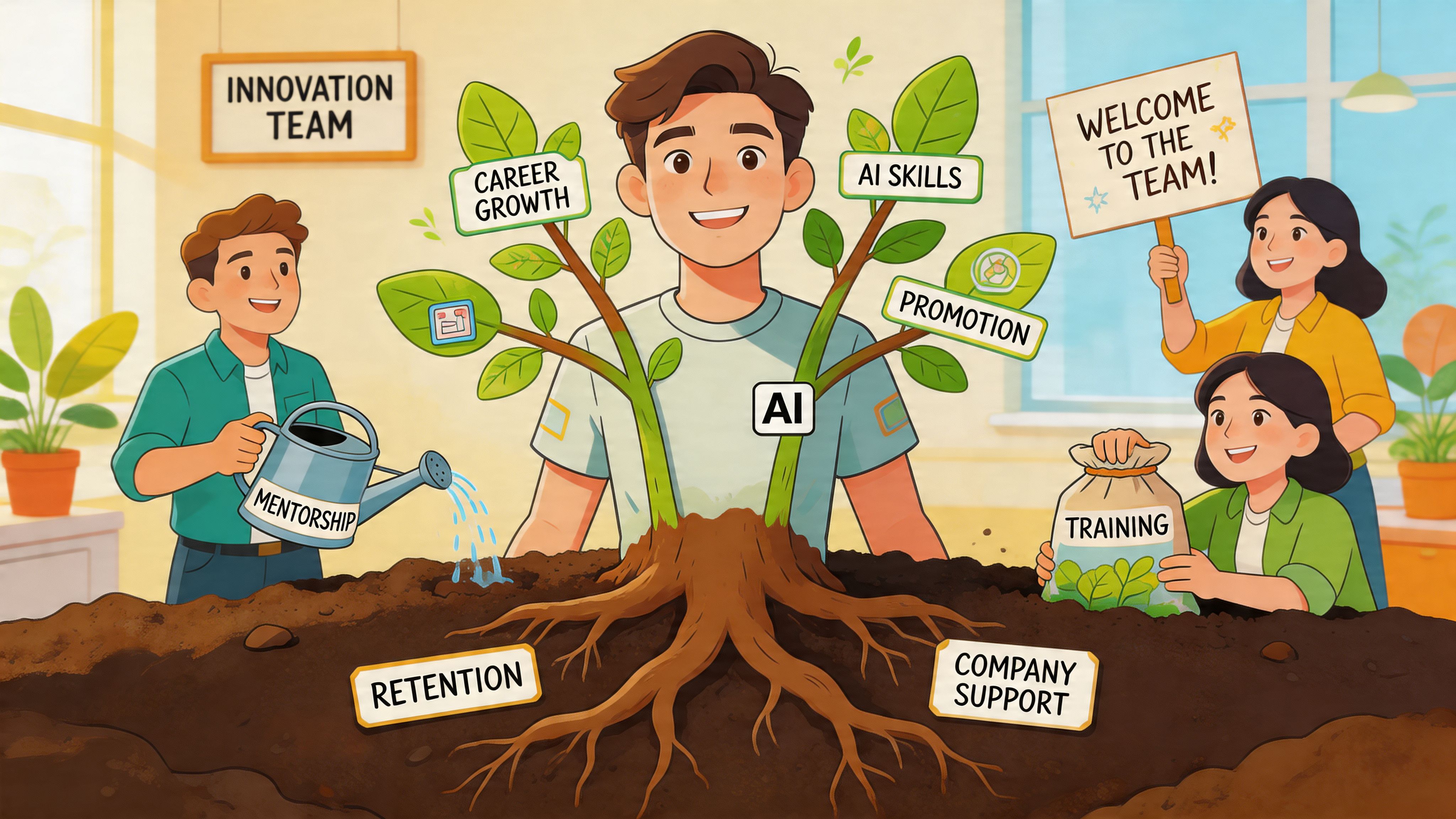

A bad AI hiring process is expensive. A bad AI onboarding process is worse.

You finally close someone strong, then they spend the first few weeks waiting on permissions, guessing which dataset matters, and discovering nobody agrees on what “good” looks like. That’s how startups burn trust with expensive hires.

The market makes this more important. According to Second Talent’s AI shortage statistics roundup, a projected 2026 benchmark puts AI talent demand ahead of supply by 3.2:1. The same roundup reports that human-led vetting models that accept only about 5% of applicants can reduce mismatch rates by 40–50% compared with algorithmic matching alone, while also improving offer acceptance by 30% in high-growth startups.

AI engineers need context faster than most hires because they work across multiple messy systems at once.

A solid onboarding plan includes:

Immediate access to data and tooling

Not eventually. In the first days.

A defined success metric

It might be model quality, adoption, reliability, cost control, or a product KPI. But it needs to exist.

A map of constraints

What data is messy, which systems are fragile, where compliance matters, who owns what.

Customer exposure

Product AI work improves when engineers see the actual user pain, not just a ticket summary.

AI people leave when the job turns into glue work with no ownership. They also leave when every hard problem gets treated like a model problem instead of a business problem.

The best retention lever is usually thoughtful scope.

Create a role where they can:

That last point matters. Some of your strongest AI hires want technical leadership, not people management. Build paths for that early.

Retention usually looks like product clarity, technical trust, and room to grow. Companies often mistake it for snacks, perks, or conference budgets alone.

Even good teams lose people. What matters is whether you learn anything from it.

If you want a practical starting point for that process, these exit survey examples to improve employee retention are useful because they push beyond generic “why are you leaving” questions and help uncover management, scope, and growth issues before they spread.

Hiring method and retention come together at this point.

When a startup relies only on keyword screens or bulk applicant flow, it tends to optimize for speed at the top of the funnel and pay for it later in mismatch, weak motivation, or poor startup fit. Curated hiring flips that. It adds judgment earlier, before the interview loop and long before onboarding.

That matters in AI roles because the job isn’t just technical. It requires startup tolerance, product sense, and the ability to work through ambiguity without overengineering.

A curated process helps when it filters for:

| What matters | Why it affects retention |
|---|---|
| Authentic production experience | People ramp faster and need less role correction |
| Startup fit | Candidates know what pace, ambiguity, and ownership feel like |
| Mutual interest before deep interviews | Less wasted process on candidates who were never serious |
| Human review of nuance | Better match on role shape than keyword-only screening |

Used well, curated hiring doesn’t just shorten the search. It improves the odds that the person who joins will still be effective and engaged months later.

If you’re hiring for AI roles and don’t want to build that screening layer from scratch, Underdog.io is one option to consider. It’s a curated marketplace that connects startups with pre-vetted tech candidates and uses human review rather than pure algorithmic matching, which is especially relevant when the role mixes product judgment, engineering skill, and startup fit.

If you need to hire AI engineering talent without wasting months on the wrong profile, start with role clarity, build a vetting loop around real work, and compete on ownership as much as compensation. If you want a curated pipeline instead of another pile of resumes, Underdog.io is worth a look.